The AI Arms Race – Why Unified Exposure Management Is Becoming a Boardroom Priority

AI-Powered Cyberattacks Escalate: Unified Exposure Management is Now Critical

For General Readers (Journalistic Brief)

The digital world is facing a significant new threat: cybercriminals are increasingly using Artificial Intelligence (AI) to launch more sophisticated and faster attacks. This means that the usual ways we protect ourselves online might not be enough anymore. Think of it like a race where attackers are getting super-powered tools, and defenders need to upgrade their own defenses just to keep up.

These AI-driven attacks can do several alarming things. They can automate the process of finding and exploiting weaknesses in computer systems, making it much harder for security teams to react. AI is also being used to create incredibly convincing fake emails and messages, making it easier for attackers to trick people into giving up sensitive information or downloading malware. Furthermore, AI can help create "polymorphic" malware that constantly changes its appearance, making it very difficult for traditional antivirus software to detect.

The core problem is that these AI-powered attacks are happening at a speed that human-led security operations can struggle to match. Traditional methods of identifying and fixing security problems, which often involve manual checks and scheduled updates, are becoming too slow. This is why experts are calling for a more proactive and automated approach to cybersecurity.

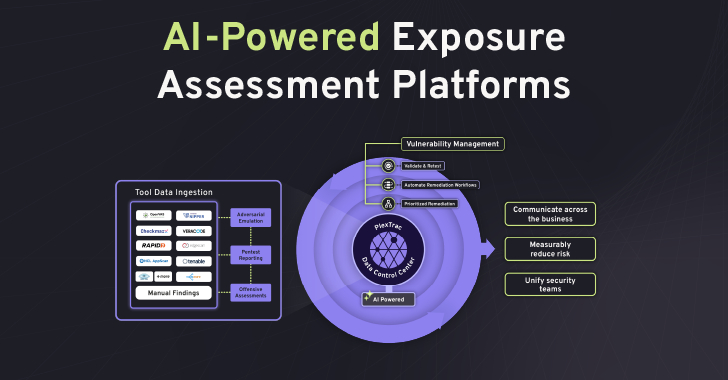

To combat this evolving threat, organizations need to adopt advanced, AI-powered defensive strategies. This includes constantly assessing their digital "exposure" – all the potential ways they could be attacked – and continuously monitoring for new threats. A key solution being recommended is "unified exposure management," which essentially means bringing together all the information about vulnerabilities, threats, and risks into one place. This allows security teams to quickly understand what the biggest dangers are and prioritize fixing them before attackers can exploit them.

Technical Deep-Dive

1. Executive Summary

The cybersecurity landscape is undergoing a paradigm shift driven by the adversarial adoption of Artificial Intelligence (AI). Threat actors are leveraging AI to automate complex attack chains, execute highly targeted and scalable social engineering operations, and develop polymorphic malware capable of evading traditional signature-based detection mechanisms. This evolution renders conventional, point-in-time defensive strategies and manual incident response processes increasingly ineffective. To counter these AI-enabled adversaries, organizations must adopt AI-powered defensive capabilities, with a strong emphasis on Autonomous Exposure Assessment and Continuous Threat Assessment. Unified exposure management platforms are identified as critical tools for consolidating vulnerability data, prioritizing risks based on contextual intelligence, and streamlining remediation workflows to match the accelerated pace of modern cyber operations.
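To make the consolidation and contextual prioritization idea concrete, here is a minimal Python sketch. It assumes that findings from several scanners arrive as (asset, CVE, CVSS, source) records. The `Finding`/`Exposure` types, the placeholder CVE identifiers, and the 2.0 and 1.5 context multipliers are hypothetical illustrations. They are not a standard scoring model or any vendor's algorithm.

```python
from dataclasses import dataclass, field

# Hypothetical record types for illustration only.
@dataclass
class Finding:
    asset: str
    cve: str
    cvss: float
    source: str  # which scanner or feed reported it

@dataclass
class Exposure:
    asset: str
    cve: str
    cvss: float
    sources: set = field(default_factory=set)

# Hypothetical context multipliers; a real program would calibrate these.
EXPLOITED_WEIGHT = 2.0
INTERNET_FACING_WEIGHT = 1.5

def consolidate(findings, internet_facing, exploited_cves):
    """Merge duplicate findings per (asset, CVE) and rank them by contextual risk."""
    merged = {}
    for f in findings:
        key = (f.asset, f.cve)
        if key not in merged:
            merged[key] = Exposure(f.asset, f.cve, f.cvss)
        exposure = merged[key]
        exposure.cvss = max(exposure.cvss, f.cvss)  # keep the most severe rating reported
        exposure.sources.add(f.source)

    def priority(e):
        score = e.cvss
        if e.cve in exploited_cves:
            score *= EXPLOITED_WEIGHT
        if e.asset in internet_facing:
            score *= INTERNET_FACING_WEIGHT
        return score

    return sorted(((priority(e), e) for e in merged.values()),
                  key=lambda pair: pair[0], reverse=True)

findings = [
    Finding("web-01", "CVE-0000-0001", 7.5, "scanner_a"),
    Finding("web-01", "CVE-0000-0001", 8.1, "scanner_b"),  # same issue seen by a second tool
    Finding("db-01", "CVE-0000-0002", 9.8, "scanner_a"),
]
ranked = consolidate(findings, internet_facing={"web-01"}, exploited_cves={"CVE-0000-0001"})
for score, e in ranked:
    print(f"{score:5.1f}  {e.asset}  {e.cve}  sources={sorted(e.sources)}")
```

In this toy data, the internet-facing, actively exploited finding outranks the internal one with the higher raw CVSS score. That reordering is the point of context-based prioritization.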

- CVSS Score: Not applicable. The article describes a general trend and the impact of AI on threat actor capabilities, not a specific, quantifiable vulnerability with a CVE identifier.

- Affected Products: Not applicable. The article discusses a broad category of threats and defensive strategies, not specific software products.

- Severity Classification: Critical (a qualitative assessment; no CVSS score applies). The described trend indicates a fundamental shift in the threat landscape that challenges current cybersecurity paradigms and calls for immediate strategic adaptation.

2. Technical Vulnerability Analysis

- CVE ID and Details: Not applicable. The article describes a trend and the impact of AI on threat actors' capabilities, not a specific, cataloged vulnerability.

- Root Cause (Code-Level): Not applicable. The article does not detail specific code-level vulnerabilities. The "root cause" of the accelerated threat landscape is the application of AI techniques by adversaries to existing attack methodologies and the development of novel evasion techniques. This includes:

- AI-driven Automation of Exploit Chaining: Adversaries use AI to analyze reconnaissance data, identify potential vulnerability chains, and orchestrate exploitation steps more rapidly than human operators. This leverages principles of graph traversal, reinforcement learning, and optimization applied to vulnerability graphs and attack path modeling.

- Generative AI for Social Engineering: Large Language Models (LLMs) and other generative AI are employed to craft highly convincing, context-aware phishing lures, increasing the success rate of initial access vectors. Because the lures draw on personalized information gleaned from OSINT or prior breaches, they sidestep the skepticism users have learned toward generic phishing. Techniques include dynamic generation of email content, subject lines, and even voice synthesis for vishing.

- AI for Polymorphic Malware Development: Machine learning models are employed to generate malware variants that dynamically alter their code structure, obfuscation techniques, and execution patterns to evade signature-based detection and behavioral analysis. This can involve techniques such as:

- Instruction Substitution: Replacing instruction sequences with functionally equivalent but syntactically different ones (e.g., `MOV EAX, 0` vs. `XOR EAX, EAX`; both set EAX to zero, although XOR also updates the flags).

- Code Obfuscation: Employing techniques like control flow flattening, opaque predicates (e.g., `if (x*x >= 0) { ... }`), and encryption/decryption routines that change with each execution (e.g., using a unique key derived from system entropy).

- Dynamic Payload Generation: Generating or retrieving malicious payloads on the fly based on environmental factors (e.g., presence of EDR, specific OS version) or network conditions.

- AI for Defensive Posture Analysis: Adversaries use AI to analyze defensive configurations, identify weak points, and predict detection mechanisms, enabling them to tailor their attacks for maximum stealth and impact. This can involve automated scanning for common misconfigurations or analyzing network traffic patterns to infer security control effectiveness.

- Affected Components: Not applicable. The impact is on the overall cybersecurity posture and the effectiveness of existing defenses across all IT environments.

- Attack Surface: The attack surface is broadened and intensified by AI. It encompasses:

- Human Element: Increased susceptibility to sophisticated, AI-generated social engineering attacks that exploit psychological vulnerabilities more effectively than generic templates.

- Software Vulnerabilities: Existing and newly discovered vulnerabilities are exploited at an accelerated pace due to AI-driven reconnaissance, vulnerability discovery (e.g., fuzzing with AI-generated inputs), and exploit development.

- Configuration Weaknesses: AI can identify and exploit misconfigurations in cloud environments (e.g., overly permissive IAM roles, exposed S3 buckets), network devices, and applications at scale.

- Detection Systems: Signature-based detection systems are directly challenged by AI-generated polymorphic malware. Behavioral detection systems are also challenged as AI can learn and mimic benign behaviors or adapt execution patterns to avoid triggering heuristics.
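The following Python sketch illustrates why exact-hash signatures are fragile against instruction substitution. The byte strings form a hypothetical toy function, not real malware. One variant zeroes EAX with `mov eax, 0` (B8 00 00 00 00), and the other uses `xor eax, eax` (31 C0). Both set EAX to zero (XOR also updates the CPU flags), yet their SHA-256 digests are unrelated. A hash-based IOC for one variant therefore does not match the other.

```python
import hashlib

# Hypothetical toy function body (32-bit x86). Only the middle instruction differs.
PROLOGUE = b"\x55\x89\xe5"   # push ebp; mov ebp, esp
EPILOGUE = b"\x5d\xc3"       # pop ebp; ret

MOV_EAX_ZERO = b"\xb8\x00\x00\x00\x00"  # mov eax, 0
XOR_EAX_EAX = b"\x31\xc0"               # xor eax, eax (same EAX result, also sets flags)

variant_a = PROLOGUE + MOV_EAX_ZERO + EPILOGUE
variant_b = PROLOGUE + XOR_EAX_EAX + EPILOGUE

hash_a = hashlib.sha256(variant_a).hexdigest()
hash_b = hashlib.sha256(variant_b).hexdigest()

print("variant A:", variant_a.hex(), hash_a)
print("variant B:", variant_b.hex(), hash_b)
print("hash signature for A matches B:", hash_a == hash_b)
```

A single substituted instruction is enough to defeat a hash match. This is why the behavioral and pattern-based detections discussed later matter.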

3. Exploitation Analysis (Red-Team Focus)

- Red-Team Exploitation Steps: The article describes a conceptual shift rather than specific exploit chains. However, a generalized AI-driven attack flow would look like this:

- AI-Powered Reconnaissance & Vulnerability Discovery: Adversary uses AI agents (e.g., trained on vulnerability databases, exploit code, and network scanning tools) to scan target networks, identify exposed services, enumerate software versions, and correlate this data with vulnerability databases (e.g., NVD, exploit-db) to identify potential entry points and exploit chains. This process is significantly faster and more comprehensive than manual methods, potentially identifying complex multi-stage attack paths.

- AI-Driven Phishing/Social Engineering: If initial access requires human interaction, AI (e.g., LLMs) generates highly personalized and contextually relevant phishing emails, messages, or voice calls. This includes tailoring language, tone, and content based on OSINT data about the target individual or organization, increasing the likelihood of credential theft or malware delivery.

- Automated Exploit Execution: Once a vulnerability or chain is identified, AI orchestrates the execution of exploits. This may involve selecting the most appropriate exploit for the target environment, dynamically adjusting exploit parameters (e.g., buffer sizes, target addresses), and managing the delivery of payloads. This can be done via automated exploit frameworks enhanced with AI decision-making.

- Polymorphic Payload Deployment: The delivered payload is designed to be polymorphic, dynamically altering its code, encryption, and execution patterns to evade detection by endpoint security solutions (AV, EDR). This could involve techniques like metamorphic code generation or runtime code mutation.

- AI-Assisted Post-Exploitation: AI can assist in lateral movement, privilege escalation, data exfiltration, and maintaining persistence by analyzing the compromised environment, identifying high-value targets (e.g., domain controllers, sensitive data stores), and automating the execution of subsequent actions. This might involve AI agents that learn network topology or analyze user behavior to identify optimal paths.

- Public PoCs and Exploits: Not applicable. The article discusses a trend, not a specific exploit.

- Exploitation Prerequisites:

- Vulnerable Systems: Presence of exploitable software or configuration weaknesses that AI can identify and target.

- Network Accessibility: Network paths allowing for remote exploitation or delivery of phishing lures. Firewalls and network segmentation are key defenses.

- Human Factor: For social engineering vectors, susceptibility of users to tailored attacks that bypass traditional security awareness training.

- Evasion Capabilities: Adversary must possess AI capabilities to generate polymorphic malware or mimic benign behaviors to bypass endpoint and network defenses.

- Automation Potential: High. The core premise of AI-driven adversaries is automation. Attacks can be significantly automated, from initial reconnaissance and vulnerability discovery to post-exploitation actions. This automation can be scaled to worm-like propagation if a suitable, widespread vulnerability is discovered and chained with AI-driven delivery mechanisms.

- Attacker Privilege Requirements: Varies significantly. Initial access can be achieved with unauthenticated remote exploits (e.g., RCE on exposed services), low-privilege user credentials (via sophisticated AI-driven phishing), or through supply-chain compromises. Post-exploitation privileges depend on the target and the attacker's objectives, with AI potentially assisting in privilege escalation techniques.

- Worst-Case Scenario:

- Confidentiality: Mass compromise of sensitive data across an organization, including intellectual property, customer PII, and financial records, due to rapid, widespread exploitation and automated exfiltration. AI can optimize exfiltration routes and data selection.

- Integrity: Critical systems could be corrupted or manipulated, leading to operational disruption, data integrity loss, or the deployment of ransomware at scale. AI could orchestrate complex, multi-stage destructive attacks that are difficult to reverse.

- Availability: Widespread denial-of-service conditions or complete system shutdowns due to ransomware or destructive attacks orchestrated by AI. The speed of AI-driven attacks could overwhelm manual incident response, leading to prolonged outages and significant business disruption.

4. Vulnerability Detection (SOC/Defensive Focus)

- How to Detect if Vulnerable: The article does not describe specific vulnerabilities, so direct detection of vulnerability status is not possible. However, the effects of AI-driven attacks and the conditions that enable them can be detected.

- Configuration Artifacts: While not directly indicating a specific vulnerability, monitoring for configurations that are known to be exploited by AI-driven tools (e.g., overly permissive cloud IAM roles, unpatched legacy systems, exposed management interfaces, weak authentication mechanisms) is crucial. Tools for cloud security posture management (CSPM) and vulnerability scanners are essential.

- Proof-of-Concept Detection Tests: Not applicable for a general trend. For specific AI-driven malware or exploit techniques, testing would involve analyzing their behavior in a controlled sandbox environment and developing detection rules based on observed artifacts and behaviors.

- Indicators of Compromise (IOCs): As the article doesn't specify a particular threat, general IOCs related to AI-driven attacks include:

- File Hashes: Unknown or rapidly changing hashes associated with polymorphic malware. YARA rules tuned for obfuscation patterns or specific AI-generated code structures might be effective.

- Network Indicators:

- Unusual communication patterns to newly registered domains or IP addresses associated with AI model training/command and control infrastructure.

- High volume of DNS lookups for obscure or dynamically generated domain names (e.g., names produced by domain generation algorithms, or DGAs).

- Suspicious traffic on non-standard ports, potentially used for covert communication by AI-driven agents or for exfiltration.

- Anomalous TLS certificate usage or self-signed certificates in unexpected contexts.

- Process Behavior Patterns:

- Processes exhibiting unusual API call sequences or a high rate of unique API calls, potentially indicating polymorphic malware or AI-driven evasion techniques.

- Unexpected process creation or termination events, especially those involving system processes or unusual parent-child relationships.

- Processes attempting to inject code into other processes (e.g., via `VirtualAllocEx`, `WriteProcessMemory`, `CreateRemoteThread`).

- Processes exhibiting self-modification or dynamic code generation in memory.

- Execution of unusual PowerShell commands or scripts, especially those involving reflection or obfuscation.

- Registry/Config Changes: Modifications to system configurations or registry keys that facilitate persistence (e.g., Run keys, Scheduled Tasks), privilege escalation, or evasion (e.g., disabling security services).

- Log Signatures: Anomalous patterns in security logs, such as:

- High volume of failed login attempts from unexpected sources or at unusual times.

- Unusual command-line arguments executed by system processes, especially those involving reconnaissance or evasion commands.

- Detection of known evasion techniques (e.g., disabling security tools via WMI, clearing event logs, manipulating security policies).
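The DGA indicator in the network list above can be approximated with a character-entropy heuristic. This Python sketch computes the Shannon entropy of the longest label other than the TLD. The 3.5-bit threshold and the 12-character minimum are hypothetical starting points. The code naively treats the last label as the TLD, so multi-part suffixes such as co.uk are not handled. CDN and cloud hostnames will also produce false positives, so results are better suited to triage than to blocking.

```python
import math
from collections import Counter

def shannon_entropy(text: str) -> float:
    """Shannon entropy in bits per character."""
    if not text:
        return 0.0
    n = len(text)
    return -sum((c / n) * math.log2(c / n) for c in Counter(text).values())

def looks_generated(domain: str, threshold: float = 3.5, min_length: int = 12) -> bool:
    """Flag domains whose longest non-TLD label is both long and high-entropy."""
    labels = domain.lower().rstrip(".").split(".")
    candidates = labels[:-1] if len(labels) > 1 else labels
    label = max(candidates, key=len)
    return len(label) >= min_length and shannon_entropy(label) >= threshold

for name in ["mail.google.com", "xj4k9qz2lp7wv3.com", "updates.example.org"]:
    print(name, looks_generated(name))
```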

- SIEM Detection Queries:

- KQL Query for Anomalous Process Execution and API Call Sequences (Enhanced):

```kql
// Flags processes with a high volume and a high diversity of API calls, which may
// indicate polymorphic malware or AI-driven evasion techniques.
// Assumption: DeviceProcessEvents does not record individual API calls. ApiCall stands
// for API-call telemetry from an EDR or a custom table; adapt table and column names.
// Related Sysmon events: EventID 1 (ProcessCreate), 8 (CreateRemoteThread), 10 (ProcessAccess).
DeviceProcessEvents
| where Timestamp > ago(24h)
| summarize CallCount = count(),
            UniqueAPIs = dcount(ApiCall),
            APISequence = make_list(ApiCall, 1000)    // up to 1,000 calls kept for sequence analysis
    by DeviceName, InitiatingProcessFileName, InitiatingProcessCommandLine,
       InitiatingProcessId, InitiatingProcessFolderPath, InitiatingProcessSHA256
| extend APISequenceLength = array_length(APISequence)
// Example alerting thresholds; tune them against your environment's baseline.
| where CallCount > 1000 and UniqueAPIs > 200
// Exclude noisy system processes. powershell.exe is intentionally not excluded,
// because obfuscated PowerShell is itself a listed indicator.
| where InitiatingProcessFileName !in~ ("svchost.exe", "explorer.exe")
// Further analysis: compare APISequence with known-benign patterns or score it with an ML model,
// adding process lineage and command-line context for more precise detection.
| project DeviceName, InitiatingProcessFileName, InitiatingProcessCommandLine,
          InitiatingProcessFolderPath, InitiatingProcessSHA256,
          CallCount, UniqueAPIs, APISequenceLength, APISequence
```

- Sigma Rule for Suspicious Process Injection:

```yaml
title: Suspicious Process Injection Attempt (Memory Write + Remote Thread)
id: 00000000-0000-0000-0000-000000000000  # Placeholder: replace with a freshly generated UUID
status: experimental
description: >
  Detects a process opening another process with the access rights needed to write
  to its memory and create a remote thread, a common code-injection technique used by
  malware and advanced attackers. Uses Sysmon EventID 10 (ProcessAccess); correlating
  hits with EventID 8 (CreateRemoteThread) for the same source and target raises confidence.
author: Your Name/Team
date: 2026/03/31
references:
  - https://thehackernews.com/2026/03/the-ai-arms-race-why-unified-exposure.html  # General context
  - https://learn.microsoft.com/en-us/sysinternals/troubleshooting/sysmon-event-id-reference  # Sysmon documentation
logsource:
  product: windows
  service: sysmon
detection:
  selection_injection_access:
    EventID: 10  # ProcessAccess
    # GrantedAccess is logged as a hex string. 0x2a = PROCESS_CREATE_THREAD (0x0002)
    # | PROCESS_VM_OPERATION (0x0008) | PROCESS_VM_WRITE (0x0020); 0x1fffff = PROCESS_ALL_ACCESS.
    # Add any other masks observed in your environment that include all three rights.
    GrantedAccess:
      - '0x2a'
      - '0x1fffff'
  filter_legit_sources:
    # Filter on the accessing (source) process. Excluding targets such as lsass.exe
    # would hide credential theft. Tune this list for your environment.
    SourceImage:
      - 'C:\Program Files\SomeDebugger\debugger.exe'  # Example: third-party debugger
      - 'C:\Program Files\SomeSecurityTool\agent.exe'  # Example: security agent
  condition: selection_injection_access and not filter_legit_sources
falsepositives:
  - Legitimate debugging or security tools.
  - Certain system processes performing legitimate memory manipulation.
level: high
```
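The failed-login signal from the log signatures above can also be expressed independently of any SIEM. This Python sketch keeps a sliding window for each source and raises one alert when a source crosses the threshold. The 5-minute window, 20-failure threshold, event format, and IP addresses are hypothetical, and the values should be tuned to the environment's baseline.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta

def failed_login_bursts(events, window=timedelta(minutes=5), threshold=20):
    """events: (timestamp, source) pairs for failed logins, sorted by timestamp.

    Returns (source, timestamp, count) when a source first reaches `threshold`
    failures inside `window`; it re-arms once the count drops below the threshold.
    """
    recent = defaultdict(deque)  # source -> failure timestamps inside the current window
    active = set()               # sources currently at or above the threshold
    alerts = []
    for ts, source in events:
        q = recent[source]
        q.append(ts)
        while ts - q[0] > window:  # drop failures older than the window
            q.popleft()
        if len(q) >= threshold:
            if source not in active:
                active.add(source)
                alerts.append((source, ts, len(q)))
        else:
            active.discard(source)
    return alerts

base = datetime(2026, 1, 1, 9, 0, 0)
events = sorted(
    [(base + timedelta(seconds=10 * i), "10.0.0.5") for i in range(25)]   # rapid burst
    + [(base + timedelta(minutes=i), "10.0.0.9") for i in range(3)]      # occasional typos
)
alerts = failed_login_bursts(events)
print(alerts)
```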


- Behavioral Indicators:

- Rapid Lateral Movement: Unexplained, high-speed propagation across network segments, potentially using automated exploit chains against discovered vulnerabilities.

- Anomalous Data Access: Processes accessing sensitive data repositories they do not normally interact with, especially if correlated with unusual network egress.

- Evasion of Security Controls: Observed attempts to disable or tamper with EDR, AV, firewalls, or logging mechanisms, often through system API calls or manipulation of configuration settings.

- Unusual Network Connections: Outbound connections to unknown or suspicious IP addresses/domains, especially those associated with AI model infrastructure, command and control servers, or large-scale data exfiltration endpoints.

- Dynamic Code Execution: Detection of processes that dynamically generate or modify their code in memory, often observed via memory analysis or specific EDR telemetry.

- Mimicry of Benign Behavior: AI-driven malware may execute actions that closely resemble legitimate user or system activity, making anomaly detection challenging without sophisticated baselining and behavioral modeling.
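Behavioral baselining can start simply. The sketch below assumes daily outbound-volume figures for each host, using hypothetical hosts and numbers. It flags hosts whose current value sits more than three standard deviations above their own history. This one-sided check suits exfiltration-style egress. It is only a minimal illustration: production behavioral modeling typically relies on much richer features than a single z-score.

```python
import math
from statistics import mean, pstdev

def egress_anomalies(history, current, threshold=3.0, min_samples=5):
    """Return {host: z_score} for hosts whose current egress is unusually high.

    history: host -> list of past daily egress values (e.g., MB)
    current: host -> today's egress value
    """
    flagged = {}
    for host, value in current.items():
        past = history.get(host, [])
        if len(past) < min_samples:
            continue  # not enough history to form a baseline
        mu, sigma = mean(past), pstdev(past)
        if sigma == 0:
            z = math.inf if value > mu else 0.0  # flat baseline: any increase stands out
        else:
            z = (value - mu) / sigma
        if z > threshold:
            flagged[host] = z
    return flagged

history = {
    "ws-01": [100, 110, 90, 105, 95],
    "ws-02": [50, 52, 48, 51, 49],
}
current = {"ws-01": 108, "ws-02": 400}
print(egress_anomalies(history, current))
```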

5. Mitigation & Remediation (Blue-Team Focus)

- Official Patch Information: Not applicable. The article describes a trend, not a specific vulnerability requiring a patch.

- Workarounds & Temporary Fixes:

- Enhanced Network Segmentation: Implement granular network segmentation (e.g., using VLANs, micro-segmentation, cloud security groups) to limit the blast radius of automated attacks and prevent rapid lateral movement.

- Strict Access Controls (Least Privilege): Enforce the principle of least privilege for all users, service accounts, and applications. This minimizes the impact of credential compromise or initial access. Implement multi-factor authentication (MFA) universally.

- Web Application Firewalls (WAFs) & Intrusion Prevention Systems (IPS): Deploy and tune WAFs and IPS to block known malicious patterns and anomalous traffic. However, be aware that AI-generated evasive techniques may bypass static rule sets. Consider WAFs with AI/ML capabilities for anomaly detection.

- Disable Unnecessary Services/Protocols: Reduce the attack surface by disabling any services, ports, or protocols not strictly required for business operations. Regularly audit open ports and running services.

- Implement Robust Endpoint Security: Deploy advanced Endpoint Detection and Response (EDR) solutions with strong behavioral analysis, anomaly detection, and AI/ML capabilities. Ensure these solutions are configured to detect polymorphic code and advanced evasion techniques.

- User Awareness Training: Conduct continuous and advanced training on recognizing sophisticated phishing and social engineering tactics. Simulate AI-generated lures in controlled exercises.

- Application Whitelisting: Implement application whitelisting to restrict the execution of unauthorized applications and scripts, especially those that might be used for malicious purposes.

- Regular Vulnerability Scanning & Patch Management: While AI accelerates exploitation, maintaining a rigorous patch management program for known vulnerabilities remains a critical first line of defense. Prioritize patching based on exploitability and impact.
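For the least-privilege item above, a first-pass check for over-broad permissions can be automated. This sketch parses an AWS IAM policy document, a JSON object with `Version` and `Statement` in which `Action` and `Resource` may each be a string or a list. It reports `Allow` statements that grant wildcard actions such as `*` or `s3:*`. The policy, Sids, and bucket names are hypothetical. The check also deliberately ignores `NotAction`, conditions, and partial wildcards such as `iam:Pass*`, all of which a real CSPM tool would evaluate.

```python
import json

def _as_list(value):
    """IAM accepts a single string or a list for Action, Resource, and Statement."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]

def wildcard_allow_statements(policy_json: str):
    """Return (Sid, wildcard actions, Resource is '*') for over-broad Allow statements."""
    policy = json.loads(policy_json)
    findings = []
    for stmt in _as_list(policy.get("Statement")):
        if stmt.get("Effect") != "Allow":
            continue
        wildcards = [a for a in _as_list(stmt.get("Action")) if a == "*" or a.endswith(":*")]
        if wildcards:
            resource_star = "*" in _as_list(stmt.get("Resource"))
            findings.append((stmt.get("Sid", "<no Sid>"), wildcards, resource_star))
    return findings

example_policy = json.dumps({
    "Version": "2012-10-17",
    "Statement": [
        {"Sid": "ReadReports", "Effect": "Allow", "Action": ["s3:GetObject"],
         "Resource": "arn:aws:s3:::example-reports/*"},
        {"Sid": "AdminLike", "Effect": "Allow", "Action": "*", "Resource": "*"},
        {"Sid": "AllS3", "Effect": "Allow", "Action": "s3:*",
         "Resource": "arn:aws:s3:::example-data"},
    ],
})
for finding in wildcard_allow_statements(example_policy):
    print(finding)
```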

- Manual Remediation Steps (Non-Automated): Not applicable for a general trend. Remediation is environment-specific and depends on the exploited vulnerability or observed malicious activity. For instance, if an AI-driven exploit against a specific web application is detected, remediation would involve patching the application, isolating the affected server, and analyzing logs for indicators of compromise.

- Risk Assessment During Remediation: The primary risk during the remediation window is continued exposure to AI-driven attacks. Because these threats are fast and automated, defenders have much less time between a weakness becoming known and its exploitation, which makes slow, manual remediation processes highly risky. The risk of incomplete or incorrect remediation also increases under pressure, potentially leaving systems vulnerable or introducing new issues. Continuous monitoring and rapid response capabilities are paramount.

6. Supply-Chain & Environment-Specific Impact

- CI/CD Impact: AI can be used to inject malicious code into build pipelines, compromise artifact repositories (e.g., npm, Docker Hub, PyPI), or exploit vulnerabilities in CI/CD tools themselves. Adversaries could use AI to identify vulnerabilities in build tools or dependencies, leading to widespread compromise of deployed software. This could involve AI-powered fuzzing of build scripts or dependency analysis to find exploitable flaws.

- Container/Kubernetes Impact: AI can be used to identify misconfigurations in Kubernetes clusters or Docker environments (e.g., overly permissive RBAC roles, exposed Kube API server, insecure container images). Exploits targeting container runtime vulnerabilities or orchestration plane weaknesses could be automated. Container isolation effectiveness is challenged if AI can identify and exploit kernel vulnerabilities, misconfigurations in the Container Network Interface (CNI) or Container Storage Interface (CSI) layers, or weaknesses in the container runtime daemon itself.

- Supply-Chain Implications: This is a significant concern. AI can accelerate the discovery of vulnerabilities in software dependencies. Adversaries can use AI to analyze dependency graphs and identify critical libraries or components whose compromise would have a cascading effect across many downstream applications. The development of AI-powered tools to automatically find and exploit vulnerabilities in open-source libraries is a plausible future threat, making dependency management and software bill of materials (SBOM) analysis even more critical.
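As a sketch of the SBOM analysis mentioned above, the following Python code walks the `components` array of a CycloneDX-style JSON SBOM, including nested components. It then matches each component's name and version against an advisory list. The advisory data and package names are hypothetical. Matching exact versions is also a simplification: real advisory feeds such as OSV express affected version ranges that require proper version comparison.

```python
import json

# Hypothetical advisory data: package name -> exact versions known to be vulnerable.
ADVISORIES = {
    "example-logger": {"2.0.0", "2.0.1"},
    "example-parser": {"1.4.2"},
}

def vulnerable_components(sbom_json: str, advisories):
    """Return sorted identifiers of SBOM components that appear in `advisories`."""
    sbom = json.loads(sbom_json)
    stack = list(sbom.get("components", []))
    hits = []
    while stack:
        comp = stack.pop()
        stack.extend(comp.get("components", []))  # CycloneDX components may nest
        name, version = comp.get("name"), comp.get("version")
        if version in advisories.get(name, set()):
            hits.append(comp.get("purl") or f"{name}@{version}")
    return sorted(hits)

example_sbom = json.dumps({
    "bomFormat": "CycloneDX",
    "specVersion": "1.5",
    "components": [
        {"type": "library", "name": "example-logger", "version": "2.0.1",
         "purl": "pkg:npm/example-logger@2.0.1"},
        {"type": "library", "name": "example-bundle", "version": "3.1.0",
         "purl": "pkg:npm/example-bundle@3.1.0",
         "components": [
             {"type": "library", "name": "example-parser", "version": "1.4.2",
              "purl": "pkg:npm/example-parser@1.4.2"},
         ]},
    ],
})
print(vulnerable_components(example_sbom, ADVISORIES))
```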

7. Advanced Technical Analysis

- Exploitation Workflow (Detailed):

- AI Agent Reconnaissance & Target Profiling: An AI agent scans the target network (internal or external), enumerates assets, identifies services, and queries vulnerability databases. It might use techniques like AI-driven fuzzing with context-aware inputs or analyzing network traffic patterns for anomalies to identify potential weaknesses. This phase can also involve OSINT gathering for social engineering.

- Vulnerability Graph Construction & Attack Path Optimization: The AI agent constructs a directed attack graph of potential attack paths by linking identified vulnerabilities, misconfigurations, and access points. AI algorithms then select the most efficient, stealthy, and highest-impact exploit path from this graph.

- Phishing Lure Generation (if needed): LLMs generate highly personalized phishing emails, SMS messages, or social media interactions, leveraging information gathered during reconnaissance (e.g., employee names, roles, recent projects, company news). The AI can dynamically adjust the tone and content for maximum psychological impact.

- Exploit Delivery & Execution: The chosen exploit is delivered. This could be via a crafted network packet targeting a vulnerable service, a malicious file delivered via email or download, or a compromised web service. The payload is designed to be polymorphic, potentially using techniques like code obfuscation, encryption, and dynamic assembly.

- Evasion & Persistence: The polymorphic payload dynamically alters its signature and behavior to evade detection by endpoint security solutions (EDR, AV) and network intrusion detection systems (IDS). AI assists in establishing stealthy persistence mechanisms, such as scheduled tasks, WMI event subscriptions, registry run keys, or rootkits, by analyzing the target OS for least-suspicious methods.

- Lateral Movement & Objective Achievement: AI agents analyze the compromised system for high-value targets (e.g., domain controllers, sensitive databases, credential stores) and automate lateral movement. This might involve AI agents that learn network topology, analyze user behavior to identify optimal paths, or exploit privilege escalation vulnerabilities. Data exfiltration is then orchestrated, with AI optimizing routes and data selection for stealth.
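Defenders can apply the same attack-path reasoning to their own environment, which is a core idea behind exposure management. The Python sketch below runs Dijkstra's algorithm over a directed graph whose edge weights are hypothetical "attacker effort" estimates. The hosts, weights, and edge meanings are illustrative assumptions. The cheapest path to a crown-jewel asset shows which exposures to remediate first.

```python
import heapq

def cheapest_attack_path(edges, start, target):
    """Dijkstra over (src, dst, effort) edges; returns (total_effort, path) or (None, [])."""
    graph = {}
    for src, dst, effort in edges:
        graph.setdefault(src, []).append((dst, effort))

    best = {start: 0.0}
    previous = {}
    heap = [(0.0, start)]
    done = set()
    while heap:
        effort, node = heapq.heappop(heap)
        if node in done:
            continue
        done.add(node)
        if node == target:
            break
        for nxt, step in graph.get(node, []):
            candidate = effort + step
            if candidate < best.get(nxt, float("inf")):
                best[nxt] = candidate
                previous[nxt] = node
                heapq.heappush(heap, (candidate, nxt))

    if target not in best:
        return None, []
    path = [target]
    while path[-1] != start:
        path.append(previous[path[-1]])
    return best[target], path[::-1]

# Hypothetical environment: edge weight = estimated attacker effort for that step.
edges = [
    ("internet", "web-01", 2.0),  # internet-facing app with a known CVE
    ("internet", "vpn-gw", 5.0),  # VPN protected by MFA
    ("web-01", "app-01", 3.0),    # reused service-account credentials
    ("vpn-gw", "app-01", 1.0),
    ("app-01", "db-01", 2.0),     # overly permissive database role
    ("web-01", "db-01", 6.0),
]
print(cheapest_attack_path(edges, "internet", "db-01"))
```

Raising the effort on any edge of the returned path, for example by patching web-01, forces the search onto a costlier route. This gives a simple way to compare remediation options.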

- Code-Level Weakness: The article does not specify code-level weaknesses. However, the application of AI to automate the discovery and exploitation of existing weaknesses (e.g., buffer overflows, heap overflows, use-after-free, SQL injection, command injection, insecure deserialization, race conditions, logic flaws) is the primary driver of accelerated threat velocity. AI can automate the fuzzing process to discover these weaknesses and generate precise exploit payloads tailored to specific memory layouts or protocol implementations.

- Related CVEs & Chaining: Not applicable. The article discusses a trend, not specific CVEs. However, it implies that AI can accelerate the discovery and chaining of existing CVEs or zero-day vulnerabilities.

- Bypass Techniques:

- Signature Evasion: Polymorphic malware generated by AI dynamically changes its code, encryption keys, and obfuscation techniques, making traditional signature-based detection ineffective.

- Behavioral Evasion: AI can learn to mimic benign process behaviors, making anomaly detection more challenging. This might involve carefully orchestrating API calls, timing operations to align with normal system activity, or using legitimate tools for malicious purposes (Living Off The Land - LOTL) in a highly sophisticated manner. AI can analyze EDR heuristics and adapt its execution to avoid triggering alerts.

- Environment-Specific Evasion: AI can be used to analyze specific security controls (e.g., WAF rulesets, IDS signatures, EDR behavioral heuristics, sandbox environments) and generate payloads or attack vectors that are specifically designed to bypass them. For example, an AI might identify weaknesses in a WAF's regex engine or a specific EDR's detection logic and craft input or execution patterns that circumvent it.

- Credential Stuffing/Phishing: AI-powered social engineering significantly increases the success rate of credential harvesting by creating highly personalized and contextually relevant lures, bypassing technical controls by exploiting human trust and cognitive biases.
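Many signature bypasses of the kind described under environment-specific evasion exploit the gap between what a filter inspects and what the application finally decodes. This Python sketch uses a hypothetical, deliberately simplistic `<script` signature. It contrasts matching raw input with matching after repeated URL-decoding and case-folding. Production WAF engines apply comparable transformation pipelines; ModSecurity, for example, offers transformations such as `t:urlDecodeUni` and `t:lowercase`. Normalization narrows this gap but does not close it.

```python
import re
from urllib.parse import unquote_plus

# Hypothetical, deliberately simplistic signature for illustration only.
SCRIPT_TAG = re.compile(r"<script")

def normalize(value: str, max_rounds: int = 3) -> str:
    """URL-decode repeatedly (to undo double encoding), then case-fold."""
    for _ in range(max_rounds):
        decoded = unquote_plus(value)
        if decoded == value:
            break
        value = decoded
    return value.casefold()

def naive_match(value: str) -> bool:
    return SCRIPT_TAG.search(value) is not None

def normalized_match(value: str) -> bool:
    return SCRIPT_TAG.search(normalize(value)) is not None

for sample in ["<script>", "<SCRIPT>", "%3Cscript%3E", "%253Cscript%253E", "hello world"]:
    print(sample, naive_match(sample), normalized_match(sample))
```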

8. Practical Lab Testing

- Safe Testing Environment Requirements:

- Isolated Network: A completely air-gapped or heavily segmented network environment, with strict firewall rules and no internet connectivity for the test systems.

- Virtual Machines (VMs): Multiple VMs representing different operating system versions, patch levels, and configurations relevant to the target environment.

- Containerized Environment: Docker and Kubernetes clusters for testing container-specific impacts, including orchestration plane security and container isolation.

- Network Taps/Packet Capture: Tools like Wireshark, tcpdump, or dedicated network analysis appliances to monitor and capture all network traffic for detailed analysis.

- Endpoint Telemetry: Sysmon, EDR agents, and centralized logging (SIEM) to capture detailed process, file, registry, and network activity on test endpoints.

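- Malware Analysis Sandbox: Self-hosted tools like Cuckoo Sandbox or custom-built sandboxes for dynamic analysis of potential AI-generated malware samples. Cloud services such as Any.run sit outside an isolated lab, so use them only where policy permits sharing samples with a third party.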

- Vulnerability Scanners & Reconnaissance Tools: Nmap, Masscan, Nessus, OpenVAS, and custom scripts to simulate AI-driven scanning and vulnerability discovery.

- How to Safely Test:

- Deploy Test VMs/Containers: Set up representative target environments with known vulnerable configurations or software versions.

- Install Defensive Tools: Deploy EDR, SIEM agents, and network monitoring tools to the test environment.

- Simulate Reconnaissance: Use reconnaissance tools to simulate AI-driven scanning. Monitor network traffic for unusual patterns, port scans, and service enumeration. Analyze logs for suspicious queries.

- Test Phishing Lures: Create AI-generated phishing templates (using safe, non-malicious content for testing purposes) and test user response in a controlled setting. Observe if the lures are convincing enough to elicit clicks or credential input.

- Deploy Simulated Polymorphic Malware: If specific AI-generated malware samples or exploit techniques are identified, analyze their behavior in a sandbox first. Then, attempt to deploy them in the isolated lab environment. Observe EDR/AV detection rates, behavioral anomalies, and network communication.

- Test Exploit Chains: If specific AI-driven exploit chains are identified (e.g., a chain involving a web vulnerability followed by privilege escalation), attempt to replicate them in the lab against vulnerable systems. Document each step and the observed outcomes.

- Monitor Detection & Response: Verify that SIEM rules, EDR alerts, and incident response playbooks trigger as expected for simulated malicious activities. Measure detection and response times.

- Test Metrics:

- Detection Rate: Percentage of simulated attacks/malware detected by security controls (e.g., EDR, IDS, WAF).

- Time to Detect (TTD): Average time from simulated attack initiation to alert generation by security systems.

- Time to Respond (TTR): Average time from alert generation to containment or remediation of the simulated threat.

- False Positive Rate: Proportion of legitimate activities incorrectly flagged as malicious by detection systems.

- Evasion Success Rate: Percentage of simulated attacks or malware samples that successfully bypassed detection mechanisms.

- Attack Path Coverage: The percentage of identified potential attack paths that were successfully exploited in the lab.
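The metrics above are simple to compute once each simulated attack is logged with its start, detection, and containment times. A minimal sketch follows. The `SimulatedAttack` record, the sample timings (in seconds), and the benign-event counts are hypothetical. The sketch expresses the false positive rate as a proportion of benign events. It treats the evasion success rate as the complement of the detection rate over the same set of attacks.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional

@dataclass
class SimulatedAttack:  # hypothetical lab record; times in seconds from test start
    name: str
    started: float
    detected: Optional[float] = None   # None: never detected (evasion succeeded)
    contained: Optional[float] = None  # None: not contained during the exercise

def lab_metrics(attacks, benign_events, benign_flagged):
    detected = [a for a in attacks if a.detected is not None]
    contained = [a for a in detected if a.contained is not None]
    detection_rate = len(detected) / len(attacks)
    return {
        "detection_rate": detection_rate,
        "evasion_success_rate": 1 - detection_rate,
        "mean_time_to_detect": mean(a.detected - a.started for a in detected) if detected else None,
        "mean_time_to_respond": mean(a.contained - a.detected for a in contained) if contained else None,
        "false_positive_rate": benign_flagged / benign_events,
    }

attacks = [
    SimulatedAttack("recon-scan", started=0, detected=30, contained=150),
    SimulatedAttack("polymorphic-payload", started=10),
    SimulatedAttack("phish-credential-use", started=20, detected=80, contained=200),
    SimulatedAttack("lateral-movement", started=40, detected=100),
]
metrics = lab_metrics(attacks, benign_events=1000, benign_flagged=5)
print(metrics)
```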

9. Geopolitical & Attribution Context

- Is there evidence of state-sponsored involvement? The article mentions "nation-states" as potential actors, but provides no specific evidence or attribution for current AI-driven attack trends. However, the advanced capabilities described (AI-driven automation, polymorphic malware, sophisticated social engineering) are highly valuable for nation-state espionage, cyber warfare, and disruptive attacks.

- Targeted Sectors: Not publicly disclosed in the article. However, AI-driven attacks could target any sector, with critical infrastructure, government agencies, defense contractors, and high-value corporations being prime targets due to their strategic importance or the sensitive data they hold.

- Attribution Confidence: Currently unconfirmed. The article describes a general trend rather than specific incidents tied to known actors. Attribution would require detailed forensic analysis of specific attack campaigns.

- Campaign Context: Not applicable.

- The described capabilities are within the reach of sophisticated state-sponsored groups and advanced persistent threats (APTs), and the use of AI could represent an evolution in their operational capabilities.