Information technology management (Wikipedia Lab Guide)

Advanced Study Guide: Information Technology Management

1) Introduction and Scope

Information Technology Management (ITM) is the discipline of strategically and operationally overseeing an organization's information technology assets and services. This encompasses the entire lifecycle of IT resources, from acquisition and deployment to operation, maintenance, and eventual decommissioning, with the overarching goal of enabling and optimizing business objectives. ITM is not merely the administration of technology but rather the integration of technical capabilities with business strategy, financial stewardship, risk mitigation, and human capital development. This study guide aims to provide a technically rigorous exploration of ITM, moving beyond high-level concepts to delve into the internal mechanics, architectural nuances, and practical considerations essential for technical professionals and aspiring IT leaders.

The scope of ITM is broad and deeply technical, including:

- Strategic Technology Alignment: Ensuring IT investments, architectures, and roadmaps directly support and enable current and future business capabilities. This involves understanding the technical feasibility and scalability of proposed solutions against business requirements.

- Operational Excellence and Resilience: Maintaining the availability, performance, integrity, and security of IT services and infrastructure. This requires deep knowledge of system monitoring, fault tolerance, disaster recovery, and performance tuning.

- Financial Governance and Cost Optimization: Managing IT budgets, capital expenditures (CapEx), operational expenditures (OpEx), procurement processes, and optimizing resource utilization to maximize return on investment (ROI). This involves understanding the total cost of ownership (TCO) for various technologies.

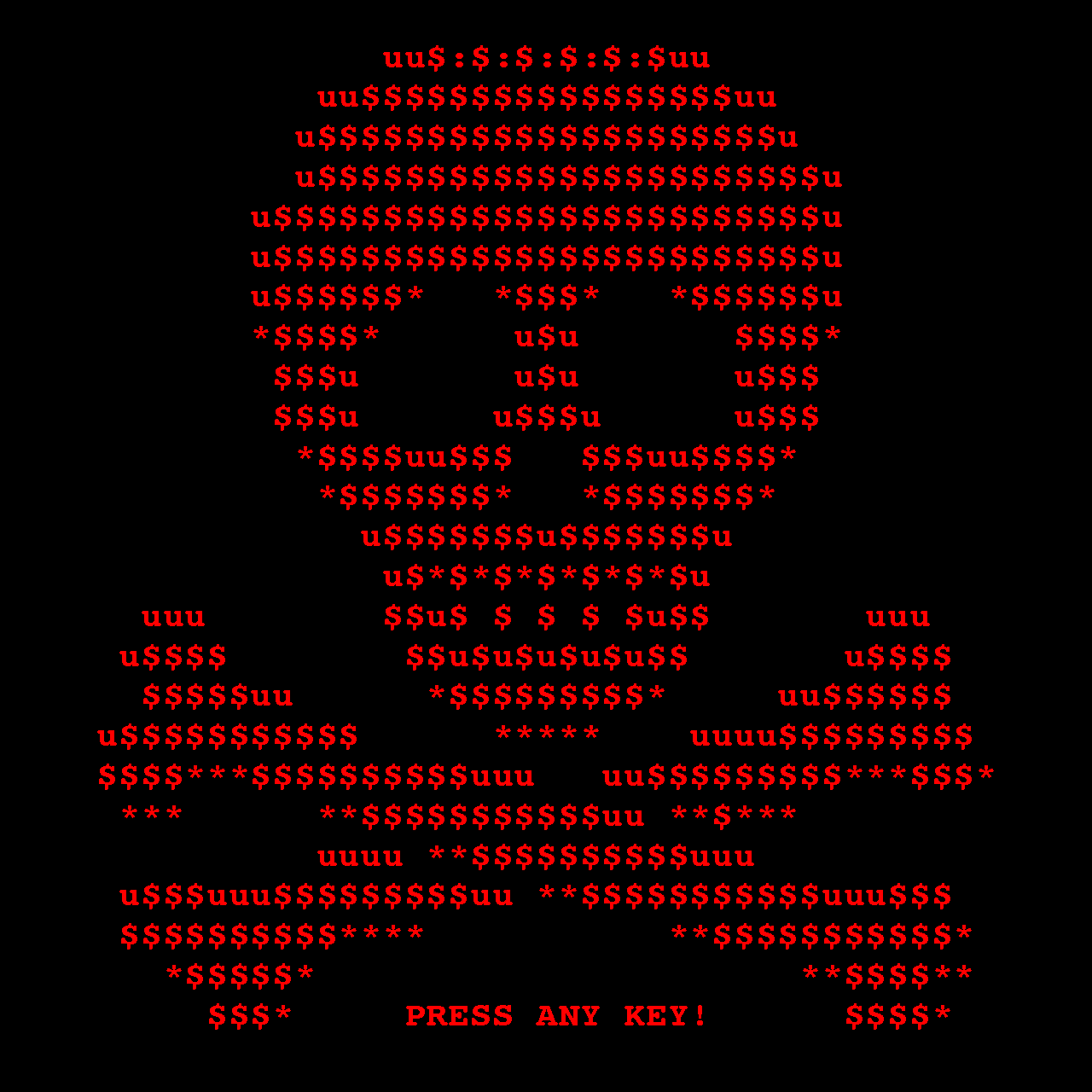

- Risk Management and Security Posture: Identifying, assessing, and mitigating IT-related threats, vulnerabilities, and compliance requirements. This necessitates a strong grasp of cybersecurity principles, cryptographic protocols, network security architectures, and incident response.

- Service Delivery and Lifecycle Management: Designing, implementing, supporting, and evolving IT services to meet defined service level objectives (SLOs) and user needs. This involves understanding service catalogs, service level agreements (SLAs), and the underlying technical stacks.

- Infrastructure Architecture and Management: Overseeing the design, deployment, and operation of hardware (servers, storage, networking), software (operating systems, middleware, applications), and data centers, including physical and virtualized environments.

ITM is distinct from Management Information Systems (MIS), which typically focuses on the application of systems to support human decision-making. ITM, conversely, is concerned with the management of the IT resources themselves, encompassing their technical architecture, operational characteristics, and strategic contribution to the enterprise.

2) Deep Technical Foundations

Effective ITM hinges on a profound understanding of core technical domains that underpin modern IT infrastructure and services. This section explores foundational concepts critical for informed technical decision-making, architectural design, and strategic planning.

2.1) Network Architecture and Protocols

Mastery of network protocols and architectures is fundamental for managing connectivity, ensuring data integrity, optimizing performance, and implementing robust security.

- TCP/IP Suite Deep Dive: The Transmission Control Protocol/Internet Protocol suite is the de facto standard for modern networking.

- IP Addressing and Subnetting:

- IPv4: Uses a 32-bit address space (e.g., 192.168.1.100). Classless Inter-Domain Routing (CIDR) notation (e.g., 192.168.1.0/24) is standard for representing network prefixes. A /24 prefix implies a subnet mask of 255.255.255.0, yielding 256 addresses, with 2 reserved (network and broadcast), which leaves 254 usable host addresses.

- IPv6: Employs a 128-bit address space (e.g., 2001:0db8:85a3:0000:0000:8a2e:0370:7334). Leading zeros within each group can be omitted, and one run of consecutive all-zero groups can be replaced with "::" (e.g., 2001:db8:85a3::8a2e:370:7334). Subnets for host LANs are typically /64.

- Subnetting Rationale: Network segmentation limits the size of broadcast domains, reduces network congestion, allows more efficient IP address allocation, and creates boundaries where security policy can be enforced. For example, segmenting a corporate network into /24 subnets for each department (e.g., 10.10.10.0/24 for Engineering, 10.10.20.0/24 for Marketing) enhances security when inter-subnet traffic is routed through a firewall, which can then filter traffic flowing between departments.
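The addressing arithmetic above can be checked with Python's standard `ipaddress` module. This is a minimal sketch: the 192.168.1.0/24 network and the departmental prefixes come from the text, while the enclosing 10.10.0.0/16 corporate block and the host 10.10.10.42 are assumptions added for illustration.

```python
import ipaddress

# IPv4: a /24 prefix leaves 32 - 24 = 8 host bits, i.e. 2**8 = 256 addresses.
net = ipaddress.ip_network("192.168.1.0/24")
usable_hosts = list(net.hosts())  # excludes the network and broadcast addresses
print(net.netmask, net.num_addresses, len(usable_hosts))

# IPv6: the library applies the standard zero-compression rules.
addr = ipaddress.ip_address("2001:0db8:85a3:0000:0000:8a2e:0370:7334")
print(addr.compressed)

# Departmental /24 subnets (from the text) inside an assumed 10.10.0.0/16 corporate block.
corp = ipaddress.ip_network("10.10.0.0/16")
engineering = ipaddress.ip_network("10.10.10.0/24")
marketing = ipaddress.ip_network("10.10.20.0/24")
print(engineering.subnet_of(corp), marketing.subnet_of(corp))

# Which department subnet does a given (hypothetical) host belong to?
host = ipaddress.ip_address("10.10.10.42")
print(host in engineering, host in marketing)
```

Membership tests like `host in marketing` are what a firewall rule keyed on a subnet prefix effectively evaluates; the subnet boundary alone does not block traffic.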


- TCP (Transmission Control Protocol): A connection-oriented, reliable transport protocol.

- Three-Way Handshake: Establishes a stateful connection.

    Client -> SYN (Seq=X, Win=W)                 -> Server
    Server -> SYN-ACK (Seq=Y, Ack=X+1, Win=W')   -> Client
    Client -> ACK (Seq=X+1, Ack=Y+1)             -> Server

SYN: Synchronize sequence numbers. ACK: Acknowledgment. Seq: Sequence number. Ack: Acknowledgment number (the next sequence number the sender expects to receive). Win: Window size, indicating buffer space for flow control.

- Flow Control: Prevents a fast sender from overwhelming a slow receiver using the TCP window mechanism. The receiver advertises its available buffer space (Win); a receiver with limited buffer space advertises a smaller window, forcing the sender to slow down.

- Congestion Control: Algorithms such as Tahoe, Reno, and CUBIC adjust sending rates based on perceived network congestion (packet loss, Round Trip Time (RTT)). Loss-based algorithms such as Reno implement Slow Start, Congestion Avoidance, Fast Retransmit, and Fast Recovery phases. During Slow Start, the congestion window (cwnd) roughly doubles every RTT until it reaches the slow-start threshold (ssthresh); Congestion Avoidance then grows cwnd linearly, by about one maximum segment size (MSS) per RTT. CUBIC replaces this linear growth with a cubic function of the time elapsed since the last congestion event.

- Retransmission: Guarantees delivery by retransmitting lost segments based on timeouts (RTO - Retransmission Timeout) or duplicate ACKs (Fast Retransmit). A timeout triggers a retransmission after a calculated RTO, which is dynamically adjusted based on observed RTT. Three duplicate ACKs signal a likely lost segment, triggering Fast Retransmit without waiting for a timeout.
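The Slow Start and Congestion Avoidance phases described above can be sketched as a loss-free simulation of cwnd measured in MSS units. This is an idealized, Reno-style model under stated assumptions: no packets are lost (so Fast Retransmit and Fast Recovery never trigger), cwnd is capped exactly at ssthresh when Slow Start overshoots, and CUBIC's growth function is not modeled.

```python
from typing import List


def simulate_cwnd(rtts: int, ssthresh: int, initial_cwnd: int = 1) -> List[int]:
    """Return cwnd (in MSS) at the start of each RTT, assuming no packet loss."""
    cwnd = initial_cwnd
    history = []
    for _ in range(rtts):
        history.append(cwnd)
        if cwnd < ssthresh:
            # Slow Start: +1 MSS per ACKed segment, i.e. cwnd doubles each RTT.
            # Capping at ssthresh is a simplification for readability.
            cwnd = min(cwnd * 2, ssthresh)
        else:
            # Congestion Avoidance (Reno-style): about +1 MSS per RTT.
            cwnd += 1
    return history


print(simulate_cwnd(rtts=8, ssthresh=16))  # [1, 2, 4, 8, 16, 17, 18, 19]
```

The switch from exponential to linear growth at ssthresh is visible at the fifth RTT.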


- UDP (User Datagram Protocol): A connectionless, best-effort transport protocol.

- Header: Minimal (8 bytes): Source Port (16 bits), Destination Port (16 bits), Length (16 bits), Checksum (16 bits).

- Use Cases: DNS (Port 53), DHCP (Ports 67/68), VoIP, streaming media where low latency is critical and occasional packet loss is acceptable. For example, a VoIP call might use UDP; if a packet is lost, a slight audio glitch occurs, which is often preferable to the delay introduced by TCP retransmissions.
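The 8-byte UDP header described above maps directly onto four unsigned 16-bit big-endian fields, which Python's `struct` module can pack and unpack. This is a minimal sketch: the source port 50000 and the payload bytes are placeholders, and the checksum is left as 0 ("not computed"), which IPv4 permits but IPv6 generally does not.

```python
import struct
from typing import Dict

# "!" = network (big-endian) byte order; "H" = unsigned 16-bit field.
UDP_HEADER = struct.Struct("!HHHH")


def build_udp_header(src_port: int, dst_port: int, payload: bytes, checksum: int = 0) -> bytes:
    """Pack Source Port, Destination Port, Length, Checksum (8 bytes total)."""
    length = UDP_HEADER.size + len(payload)  # Length covers header + payload
    return UDP_HEADER.pack(src_port, dst_port, length, checksum)


def parse_udp_header(datagram: bytes) -> Dict[str, int]:
    src, dst, length, checksum = UDP_HEADER.unpack_from(datagram)
    return {"src_port": src, "dst_port": dst, "length": length, "checksum": checksum}


payload = b"example query"  # placeholder bytes, not a real DNS message
header = build_udp_header(50000, 53, payload)
parsed = parse_udp_header(header + payload)
print(len(header), parsed)
```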

- Ports: TCP and UDP ports (0-65535) are multiplexing identifiers.

- Well-Known Ports (0-1023): HTTP (80), HTTPS (443), SSH (22), FTP (21), SMTP (25).

- Registered Ports (1024-49151): Assigned by IANA for specific applications.

- Dynamic/Private Ports (49152-65535): Used for ephemeral client-side connections. When a client initiates a connection, it typically uses a dynamic port from this range.


- Routing Protocols: Mechanisms for routers to exchange network reachability information.

- Interior Gateway Protocols (IGPs): Operate within a single Autonomous System (AS).

- OSPF (Open Shortest Path First): A link-state routing protocol. Routers flood Link State Advertisements (LSAs) so that every router in an area builds an identical Link State Database (LSDB), a complete topological map of that area. Each router then runs Dijkstra's algorithm over the LSDB to compute its shortest-path tree. For example, a Router LSA might describe the state of a router's directly connected links:

    Router ID: 192.168.1.1, Link State Type: Router, Links: {Type: Point-to-Point, Neighbor ID: 192.168.1.2, Cost: 1}

- EIGRP (Enhanced Interior Gateway Routing Protocol): An advanced distance-vector protocol (sometimes described as a hybrid protocol) developed by Cisco. It uses the Diffusing Update Algorithm (DUAL) for rapid convergence and supports unequal-cost load balancing. DUAL maintains a topology table holding each destination's successor (best route) and any feasible successors (loop-free backup routes), so it can fail over to a feasible successor without a full route recomputation.
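The SPF calculation described for OSPF can be sketched as Dijkstra's algorithm over a small LSDB. This is a simplified illustration: the four-router topology and its costs are hypothetical, every link is treated as point-to-point, and real OSPF details such as network LSAs, areas, and equal-cost multipath are omitted. The function returns, for each router, the total cost from the root and the first hop used to reach it, which is what a routing table needs.

```python
import heapq
from typing import Dict, Optional, Tuple

# Hypothetical LSDB: each router's point-to-point links and OSPF costs.
LSDB: Dict[str, Dict[str, int]] = {
    "192.168.1.1": {"192.168.1.2": 1, "192.168.1.3": 4},
    "192.168.1.2": {"192.168.1.1": 1, "192.168.1.3": 2, "192.168.1.4": 5},
    "192.168.1.3": {"192.168.1.1": 4, "192.168.1.2": 2, "192.168.1.4": 1},
    "192.168.1.4": {"192.168.1.2": 5, "192.168.1.3": 1},
}


def shortest_path_tree(lsdb: Dict[str, Dict[str, int]], root: str) -> Dict[str, Tuple[int, Optional[str]]]:
    """Dijkstra's algorithm: return {router: (total_cost, first_hop)} from root."""
    best: Dict[str, Tuple[int, Optional[str]]] = {}
    heap = [(0, root, None)]  # (cost so far, router, first hop used to reach it)
    while heap:
        cost, node, first_hop = heapq.heappop(heap)
        if node in best:
            continue  # already finalized at an equal or lower cost
        best[node] = (cost, first_hop)
        for neighbor, link_cost in lsdb.get(node, {}).items():
            if neighbor not in best:
                # Direct neighbors of the root are their own first hop.
                hop = neighbor if node == root else first_hop
                heapq.heappush(heap, (cost + link_cost, neighbor, hop))
    return best


for router, (cost, hop) in sorted(shortest_path_tree(LSDB, "192.168.1.1").items()):
    print(router, "cost", cost, "via", hop)
```

Here the root reaches 192.168.1.4 at cost 4 through 192.168.1.2 and 192.168.1.3, rather than over the direct-looking but more expensive paths.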


- Exterior Gateway Protocols (EGPs): Operate between different ASes.

- BGP (Border Gateway Protocol): The routing protocol of the Internet. It's a path-vector protocol that exchanges reachability information based on AS paths, policies, and attributes (e.g., MED - Multi-Exit Discriminator, Local Preference, AS_PATH). BGP is designed for scalability and policy enforcement. A BGP update might look like:

Network: 198.51.100.0/24, AS_PATH: 64512 64513, NEXT_HOP: 192.0.2.1, LOCAL_PREF: 100, MED: 50.
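How the attributes in such an update influence route choice can be sketched with three of BGP's best-path tie-breakers, applied in order: highest LOCAL_PREF, then shortest AS_PATH, then lowest MED. This is deliberately incomplete: the real decision process has further steps (such as ORIGIN, eBGP-over-iBGP preference, and IGP cost to the next hop), and the sketch assumes every candidate was learned from the same neighboring AS so that their MEDs are comparable. The second and third routes are hypothetical alternatives to the update shown above.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Route:
    prefix: str
    as_path: Tuple[int, ...]
    next_hop: str
    local_pref: int = 100
    med: int = 0


def best_path(candidates: List[Route]) -> Route:
    """Simplified selection: highest LOCAL_PREF, then shortest AS_PATH, then lowest MED."""
    return min(candidates, key=lambda r: (-r.local_pref, len(r.as_path), r.med))


routes = [
    Route("198.51.100.0/24", (64512, 64513), "192.0.2.1", local_pref=100, med=50),
    Route("198.51.100.0/24", (64512, 64513), "192.0.2.5", local_pref=100, med=10),  # hypothetical
    Route("198.51.100.0/24", (64512, 64514, 64513), "192.0.2.9", local_pref=100, med=0),  # hypothetical
]
print(best_path(routes).next_hop)  # 192.0.2.5: equal LOCAL_PREF and AS_PATH length, lower MED
```

Because LOCAL_PREF is compared first, an operator can force a longer AS path to win simply by raising its LOCAL_PREF, which is the kind of policy control the text refers to.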



- Network Services: Essential infrastructure services.

- DNS (Domain Name System): A hierarchical, distributed database for translating domain names into IP addresses.

- Resolution Process: A client's stub resolver sends a recursive query to a recursive resolver, which then performs iterative queries on the client's behalf, starting at the root servers and working down through the TLD servers to the authoritative name servers. For example, when a client requests www.example.com, the recursive resolver (on a cache miss) queries a root server, which refers it to the .com TLD servers. A .com TLD server refers it to example.com's authoritative name servers, which return the IP address.

- Record Types: A (IPv4), AAAA (IPv6), CNAME (Canonical Name), MX (Mail Exchanger), NS (Name Server), SOA (Start of Authority), SRV (Service Locator).

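- DNSSEC (DNS Security Extensions): Provides origin authentication and integrity for DNS data using digital signatures (e.g., RSA, ECDSA). A DNSKEY record contains the public key used to verify RRSIG (Resource Record Signature) records, and a DS (Delegation Signer) record in the parent zone holds a hash (e.g., SHA-256) of the child zone's key, forming a chain of trust from the root zone downward. DNSSEC does not encrypt DNS traffic.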
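The referral walk in the resolution process can be sketched with in-memory stand-ins for the root, TLD, and authoritative servers. Everything here is hypothetical: the server labels are placeholders, and 192.0.2.80 is a documentation-range address rather than example.com's real address. A real recursive resolver sends DNS messages over UDP/TCP port 53, caches answers, and handles glue records and CNAMEs, none of which is modeled.

```python
from typing import List, Tuple

# Hypothetical in-memory stand-ins for name servers. Each maps a name either to
# a referral ("NS", next server) or to an answer ("A", IPv4 address).
SERVERS = {
    "root": {"com.": ("NS", "com-tld")},
    "com-tld": {"example.com.": ("NS", "example-auth")},
    "example-auth": {"www.example.com.": ("A", "192.0.2.80")},
}


def resolve_iteratively(qname: str, start: str = "root") -> Tuple[str, List[str]]:
    """Follow referrals from the root down, as a recursive resolver does on a cache miss."""
    server, path = start, []
    while True:
        path.append(server)
        zone_data = SERVERS[server]
        # Pick the most specific name this server holds that matches qname on a label boundary.
        matches = [n for n in zone_data if qname == n or qname.endswith("." + n)]
        if not matches:
            raise LookupError(f"{server} has no data for {qname}")
        rtype, value = zone_data[max(matches, key=len)]
        if rtype == "A":
            return value, path      # authoritative answer reached
        server = value              # referral: ask the next, more specific server


print(resolve_iteratively("www.example.com."))
```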


- DHCP (Dynamic Host Configuration Protocol): Automates IP address assignment and network configuration.

- DORA Process: Discover (client broadcasts DHCPDISCOVER), Offer (server offers IP, lease time, options via DHCPOFFER), Request (client requests offered IP via DHCPREQUEST), Acknowledge (server confirms lease via DHCPACK).

- DHCP Options: Gateway, DNS servers, domain name, lease time, TFTP server and boot file name (for PXE boot).


2.2) Operating System Internals

A deep understanding of OS kernels, process/thread management, memory management, and file systems is crucial for performance tuning, troubleshooting, security hardening, and capacity planning.

- Process and Thread Management:

- Process States: New, Ready, Running, Waiting (Blocked), Terminated.

- Scheduling Algorithms:

- Round-Robin: Each process gets a fixed time slice (quantum). Fair, but a small quantum increases context-switching overhead, while a very large quantum makes it behave like first-come, first-served (FCFS). A quantum of 10ms means a process will run for at most 10ms before being preempted.

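- Priority-Based: Processes with higher priority are executed first. Can lead to starvation of low-priority processes, commonly mitigated by aging (gradually raising the priority of processes that have waited a long time). Priority scheduling can be preemptive (a newly ready higher-priority process interrupts the running one) or non-preemptive (it waits until the running process yields the CPU). A real-time process might have a higher priority than a batch processing job.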

- Multilevel Feedback Queues: Combines aspects of other algorithms, using multiple queues with different priorities and time slices. Processes can move between queues based on their behavior. A CPU-bound process might start in a high-priority queue with a short quantum, and if it consistently uses its quantum, it moves to a lower-priority queue with a longer quantum. I/O-bound processes might stay in high-priority queues.
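The Round-Robin trade-off above can be made concrete with a small simulation. This sketch assumes all processes arrive at time 0, uses hypothetical process names and CPU burst lengths in ms, and ignores the time spent performing each context switch; it only counts how many switches occur.

```python
from collections import deque
from typing import Dict, Tuple


def round_robin(bursts: Dict[str, int], quantum: int) -> Tuple[Dict[str, int], int]:
    """Simulate Round-Robin for processes that all arrive at t=0.

    Returns each process's completion time and the number of context switches
    (counted whenever the CPU moves to a different process).
    """
    queue = deque(bursts.items())
    clock, switches, last = 0, 0, None
    completion: Dict[str, int] = {}
    while queue:
        name, remaining = queue.popleft()
        if last is not None and name != last:
            switches += 1
        run = min(quantum, remaining)  # run for at most one quantum
        clock += run
        remaining -= run
        if remaining:
            queue.append((name, remaining))  # preempted: back of the ready queue
        else:
            completion[name] = clock
        last = name
    return completion, switches


print(round_robin({"A": 25, "B": 10, "C": 15}, quantum=10))
print(round_robin({"A": 25, "B": 10, "C": 15}, quantum=5))
```

Halving the quantum leaves the final completion time unchanged here (switch cost is ignored) but increases the number of context switches, which is the overhead the text warns about.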

- Context Switching: The mechanism by which the CPU switches from executing one process/thread to another. This involves saving the CPU state (registers, program counter, stack pointer, page table base register) of the current task and loading the state of the next task.

- Overhead: Context switching is an expensive operation, consuming CPU cycles that could otherwise be used for application execution and reducing the effectiveness of CPU caches and the TLB. Frequent context switching can indicate a system under heavy load or poor scheduling; a high context-switch rate (e.g., the cs column in vmstat or cswch/s in sar -w) is a key indicator.

- Kernel Mode vs. User Mode: A system call such as read() from a user-space application causes a mode switch from user mode to kernel mode, which is cheaper than a full context switch. A process/thread context switch occurs only if the kernel schedules another task, for example while the caller blocks waiting for I/O.


- Memory Management: Techniques for efficiently allocating and managing system memory.

- Virtual Memory: An abstraction that provides each process with its own private address space, which can be larger than physical RAM.

- Paging: Divides memory into fixed-size blocks called pages (typically 4KB; some architectures use 8KB or larger pages, and many support "huge pages" such as 2MB). The MMU (Memory Management Unit) translates virtual addresses to physical addresses using page tables, caching recent translations in the TLB (Translation Lookaside Buffer). For example, virtual address 0x1000 might map to physical address 0x5000 via the page table. If the page containing 0x1000 is not in physical RAM, a page fault is generated.

- Segmentation: Divides memory into variable-size logical segments. Largely superseded by paging for general memory management in modern OSes; a process's code, data, and stack "segments" are now usually just regions of a paged address space.

- Swapping/Paging Out: When physical memory runs short, less-used pages are written to a backing store (swap partition or page file) on disk. This lets the combined memory demand of running programs exceed physical RAM, at the cost of disk latency whenever a swapped-out page is needed again.

- Page Faults: Occur when a process tries to access a page not currently in physical memory. The OS must retrieve it from disk, incurring significant latency. Frequent page faults (a high page fault rate) indicate memory pressure. Tools like perf or vmstat can show page fault statistics.
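The translation in the paging example (virtual 0x1000 to physical 0x5000) can be sketched as splitting an address into a virtual page number and an offset. This assumes 4KB pages and a hypothetical single-level page table held in a dictionary; real MMUs walk multi-level tables in hardware and cache results in the TLB, and a real page fault is handled by the OS rather than raised as an exception.

```python
PAGE_SIZE = 4096                           # 4KB pages
OFFSET_BITS = PAGE_SIZE.bit_length() - 1   # 12: the low 12 bits are the page offset

# Hypothetical single-level page table: virtual page number -> physical frame number.
page_table = {0x1: 0x5, 0x2: 0x9}


class PageFault(Exception):
    pass


def translate(vaddr: int) -> int:
    vpn = vaddr >> OFFSET_BITS          # virtual page number
    offset = vaddr & (PAGE_SIZE - 1)    # position within the page, unchanged by translation
    if vpn not in page_table:
        # A real OS would now load the page (from swap or a mapped file) and retry.
        raise PageFault(f"page {vpn:#x} not resident (address {vaddr:#x})")
    return (page_table[vpn] << OFFSET_BITS) | offset


print(hex(translate(0x1000)), hex(translate(0x1234)))  # 0x5000 0x5234
```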


- Memory Allocation:

- Stack: Used for function call frames, local variables, and return addresses. Grows downwards in memory on most common architectures (e.g., x86, ARM). LIFO (Last-In, First-Out), with push and pop operations. Stack overflow occurs when the stack exceeds its size limit; a recursive function without a proper base case can lead to a stack overflow.

- Heap: Used for dynamic memory allocation (malloc, calloc, realloc, free in C/C++). Managed by a heap allocator (e.g., ptmalloc, jemalloc, tcmalloc). Can suffer from fragmentation (external and internal).

- External Fragmentation: Free memory is broken into small, non-contiguous blocks, preventing larger allocations. If a system has 100MB free but it's split into 100 non-contiguous blocks of 1MB, a request for 2MB cannot be satisfied.

- Internal Fragmentation: Allocated blocks are larger than requested, wasting space within the block. If an application requests 10 bytes but the allocator provides a 16-byte block, 6 bytes are internally fragmented.
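Both fragmentation examples above reduce to simple arithmetic, sketched here. The 16-byte allocation granularity is an assumption chosen to match the 10-byte example; real allocators also add per-block metadata and use size classes, and the free-block list stands in for a real heap's free list.

```python
from typing import List

ALIGNMENT = 16  # assumed allocator granularity (bytes)


def allocated_size(requested: int) -> int:
    """Round a request up to the next multiple of ALIGNMENT."""
    return (requested + ALIGNMENT - 1) // ALIGNMENT * ALIGNMENT


def internal_waste(requested: int) -> int:
    """Internal fragmentation: bytes allocated but never requested."""
    return allocated_size(requested) - requested


def can_satisfy(free_blocks_mb: List[int], request_mb: int) -> bool:
    """External fragmentation: a request needs ONE contiguous free block big enough."""
    return any(block >= request_mb for block in free_blocks_mb)


free_blocks = [1] * 100  # 100MB free in total, but split into 1MB pieces
print(internal_waste(10), sum(free_blocks), can_satisfy(free_blocks, 2))  # 6 100 False
```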



- File Systems: Structures and algorithms for organizing and accessing data on storage devices.

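- Inodes (Unix/Linux): Data structures that store metadata about files and directories: type, permissions, ownership, timestamps, size, link count, and pointers to data blocks. The file name is not stored in the inode but in the directory entry that points to it. Each file/directory has an inode number that is unique within its file system. The inode table is stored on disk. The ls -li command shows inode numbers.

- NTFS (Windows): Uses the Master File Table (MFT) as a database that stores metadata for all files and directories; the contents of very small files can be stored directly inside their MFT records.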

- Journaling: A technique to ensure file system consistency. Before making changes to the main file system structure, the intended operations are logged in a journal. If a crash occurs, the journal can be replayed to recover to a consistent state. Examples: ext4, XFS, NTFS (APFS achieves similar crash consistency through copy-on-write metadata rather than a journal). This significantly reduces file system check times after an unclean shutdown: an fsck (file system check) on a non-journaled file system after a crash can take hours, while a journaled file system might recover in minutes.

- File System Performance: Factors include block size, inode density, fragmentation, caching (page cache), and the underlying storage medium (HDD vs. SSD). SSDs offer significantly lower latency for random I/O operations. A larger block size can improve sequential read/write performance but increases internal fragmentation for small files.
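The inode metadata described above is exposed to programs through the stat() system call, which Python wraps as `os.stat`. This sketch assumes a Unix-like system and a file system that supports hard links; the file names are hypothetical. It also shows that a hard link is just a second directory entry pointing at the same inode, which is why names live in directories rather than in inodes.

```python
import os
import stat
import tempfile

# Assumes a Unix-like system and a file system that supports hard links.
with tempfile.TemporaryDirectory() as tmp:
    original = os.path.join(tmp, "report.txt")       # hypothetical file names
    alias = os.path.join(tmp, "report-link.txt")
    with open(original, "wb") as f:
        f.write(b"quarterly numbers\n")

    # A hard link is a second directory entry (name) pointing at the same inode.
    os.link(original, alias)

    st = os.stat(original)
    same_inode = os.stat(alias).st_ino == st.st_ino
    print("inode:", st.st_ino)
    print("mode:", stat.filemode(st.st_mode))  # exact permission bits depend on the umask
    print("links:", st.st_nlink, "size:", st.st_size, "same inode:", same_inode)
```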


2.3) Security Principles and Cryptography

Robust ITM requires the proactive integration of security principles and cryptographic techniques to protect data and systems.

- Authentication, Authorization, and Accounting (AAA):

- Authentication: Verifying the identity of a user, process, or device.

- Factors: Something you know (password, PIN), something you have (token, smart card, mobile device), something you are (biometrics - fingerprint, facial scan).

- Protocols: Kerberos (ticket-based authentication), RADIUS (Remote Authentication Dial-In User Service), TACACS+ (Terminal Access Controller Access-Control System Plus), SAML (Security Assertion Markup Language) for federated single sign-on, and OAuth 2.0 (Open Authorization), which is strictly a delegated-authorization framework and is typically paired with OpenID Connect when used for authentication. Kerberos uses a trusted third party (the Key Distribution Center) to issue tickets, so passwords are never sent over the network.

- MFA (Multi-Factor Authentication): Combines two or more distinct factors to increase security. A common example is a password (something you know) combined with a one-time code from a mobile app (something you have).
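The "one-time code from a mobile app" in the MFA example is commonly a time-based one-time password (TOTP, RFC 6238), built on HOTP (RFC 4226). The sketch below assumes the usual defaults (HMAC-SHA1, 30-second steps, 6 digits) and uses the RFC test secret rather than a real enrollment secret. Both the server and the app hold the shared secret, so possession of the enrolled device is the "something you have" factor.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HOTP (RFC 4226): HMAC-SHA1 over an 8-byte big-endian counter, then dynamic truncation."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # low 4 bits of the last byte select a 4-byte window
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF  # clear the top bit
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, unix_time: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """TOTP (RFC 6238): HOTP whose counter is the number of `step`-second intervals since the epoch."""
    if unix_time is None:
        unix_time = time.time()
    return hotp(secret, int(unix_time // step), digits)


# RFC test secret (ASCII "12345678901234567890"); real secrets come from enrollment.
secret = b"12345678901234567890"
print(totp(secret, unix_time=59, digits=8))  # 94287082, the RFC 6238 SHA-1 test vector
```

A verifying server would compare codes with `hmac.compare_digest` and usually accept one step of clock skew in either direction.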

- Authorization: Determining what an authenticated entity is permitted to do.

- Access Control Models:

- DAC (Discretionary Access Control): The owner of the resource controls access (e.g., file permissions in Unix: rwxr-xr-x for owner, group, others).

- MAC (Mandatory Access Control): A system-wide security policy dictates access (e.g., SELinux, which uses security labels, or AppArmor, which uses path-based profiles). A process might have a security context (e.g., unconfined_t), and a file might have a security context (e.g., etc_t). SELinux policy defines whether unconfined_t can access etc_t.

- RBAC (Role-Based Access Control): Permissions are assigned to roles, and users are assigned to roles. This simplifies management by grouping users with similar access needs. For instance, a "Database Administrator" role might have SELECT, INSERT, UPDATE, DELETE permissions on all tables in a database, and specific users are assigned to this role.
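The RBAC indirection (users to roles, roles to permissions) can be sketched in a few lines. The role "Database Administrator" and its permissions come from the example above; the "Analyst" role and the users alice and bob are hypothetical. In practice these mappings live in a directory service or the database's own role system rather than in code.

```python
from typing import Dict, Set

# Role -> permissions ("Analyst" is a hypothetical second role).
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "Database Administrator": {"SELECT", "INSERT", "UPDATE", "DELETE"},
    "Analyst": {"SELECT"},
}
# User -> roles (hypothetical users).
USER_ROLES: Dict[str, Set[str]] = {
    "alice": {"Database Administrator"},
    "bob": {"Analyst"},
}


def is_authorized(user: str, permission: str) -> bool:
    """A user holds a permission only through one of their assigned roles."""
    return any(permission in ROLE_PERMISSIONS.get(role, set())
               for role in USER_ROLES.get(user, set()))


print(is_authorized("alice", "DELETE"), is_authorized("bob", "DELETE"))  # True False
```

Granting a new administrator access is then a single change (adding the user to the role), and revoking a permission from the role immediately affects every member.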



- Accounting: Recording system events and user actions for auditing, billing, and security analysis.

- Logs: System logs (syslog, Event Viewer), application logs, security event logs (e.g., Windows Security Auditing, Linux auditd). A successful login attempt might generate an event ID 4624 in Windows Event Viewer, including the username, logon type, and source IP address.



- Cryptography:

- Symmetric Encryption: Uses a single secret key for both encryption and decryption.

- Algorithms: AES (Advanced Encryption Standard) is the current standard, typically using 128, 192, or 256-bit keys. Block ciphers operate on fixed-size blocks of data (e.g., 128 bits for AES).

- Modes of Operation: ECB (Electronic Codebook - insecure due to pattern repetition), CBC (Cipher Block Chaining - requires an Initialization Vector, IV), GCM (Galois/Counter Mode - provides authenticated encryption with associated data - AEAD, highly recommended; its nonce must never be reused with the same key). In CBC mode, each plaintext block is XORed with the previous ciphertext block (the first block with the IV) before encryption. This ensures that identical plaintext blocks produce different ciphertext blocks, both within a message and across messages, provided each message uses a fresh, unpredictable IV.

- Key Distribution: The primary challenge. Requires a secure channel or protocol (e.g., Diffie-Hellman key exchange, key wrapping) to exchange keys.
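The Diffie-Hellman exchange mentioned above lets two parties agree on a shared secret while sending only public values. This sketch uses the toy textbook parameters p = 23, g = 5, which are far too small for real use; production systems use standardized groups of 2048 bits or more, or elliptic curves such as X25519, and pass the shared secret through a key derivation function. Plain Diffie-Hellman is also unauthenticated, so protocols like TLS pair it with certificates to prevent man-in-the-middle attacks.

```python
import secrets

# Toy public parameters (textbook-sized, insecure): prime modulus and generator.
P = 23
G = 5


def public_value(private: int) -> int:
    """The value each side sends over the network: G**private mod P."""
    return pow(G, private, P)


def shared_secret(own_private: int, peer_public: int) -> int:
    """Both sides compute G**(a*b) mod P without ever sending a or b."""
    return pow(peer_public, own_private, P)


# Each side picks a random private exponent in [1, P-2].
a = secrets.randbelow(P - 2) + 1
b = secrets.randbelow(P - 2) + 1
A, B = public_value(a), public_value(b)
assert shared_secret(a, B) == shared_secret(b, A)  # both derive the same key material

print(public_value(6), public_value(15), shared_secret(6, public_value(15)))  # 8 19 2
```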

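- Asymmetric (Public-Key) Encryption: Uses a key pair: a public key that can be shared freely and a private key that must be kept secret. Data encrypted with the public key can only be decrypted with the corresponding private key; for digital signatures, the private key signs and the public key verifies.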

- Algorithms: RSA (Rivest–Shamir–Adleman), ECC (Elliptic Curve Cryptography - more efficient for equivalent security levels).

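- Use Cases: Secure key exchange (e.g., the TLS handshake) and digital signatures (verifying authenticity and integrity). In the legacy RSA key exchange of TLS 1.2 and earlier, the client encrypts a pre-master secret with the server's public key, which only the server can decrypt with its private key; both sides then derive symmetric session keys from it. TLS 1.3 removed RSA key transport: it agrees on keys with ephemeral (EC)Diffie-Hellman and uses the server's private key only to sign the handshake.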

- Public Key Example (PEM format):

    -----BEGIN PUBLIC KEY-----
    MIIBIjANBgkqhkiG9w0BAQEFAAOCAQ8AMIIBCgKCAQEA...
    -----END PUBLIC KEY-----

- Private Key Example (PEM format; must be kept secret and protected with strong passphrases or hardware security modules):

    -----BEGIN PRIVATE KEY-----
    MIIEvQIBADANBgkqhkiG9w0BAQEFAASCBKcwggSjAgEAAoIBAQ...
    -----END PRIVATE KEY-----

- Hashing: One-way function that maps data of arbitrary size to a fixed-size output (hash digest).

- Properties: Deterministic, collision-resistant (hard to find two inputs producing the same hash), pre-image resistant (hard to find input given hash), second pre-image resistant (hard to find a different input with the same hash as a given input).

- Algorithms: SHA-256, SHA-3. MD5 and SHA-1 are considered cryptographically broken and should not be used for security purposes. For example, SHA256("password") will always produce the same 256-bit hash.

- Use Cases: Password storage (salted hashing, preferably with a deliberately slow function such as PBKDF2, bcrypt, scrypt, or Argon2), data integrity checks, digital signatures. A salt is a random value added to a password before hashing, making rainbow table attacks more difficult.

- Collision Example: If hash(A) == hash(B) and A != B, a collision has occurred. This can be exploited in certain attacks, such as forging digital signatures.
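Determinism and salting can both be seen with Python's standard `hashlib`. This sketch uses PBKDF2-HMAC-SHA256 as the slow, salted password hash; the 600,000-iteration work factor and 16-byte salt are assumed values to be tuned to current guidance and hardware. Storing the salt alongside the derived key is normal; only the password must stay secret.

```python
import hashlib
import hmac
import os
from typing import Optional, Tuple

# Plain SHA-256 is deterministic: the same input always yields the same digest.
digest = hashlib.sha256(b"password").hexdigest()
print(digest)

ITERATIONS = 600_000  # assumed work factor; tune to current guidance and hardware


def hash_password(password: str, salt: Optional[bytes] = None) -> Tuple[bytes, bytes]:
    """Return (salt, derived_key) using PBKDF2-HMAC-SHA256 with a random per-user salt."""
    salt = salt if salt is not None else os.urandom(16)
    key = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return salt, key


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    _, key = hash_password(password, salt)
    return hmac.compare_digest(key, expected)  # constant-time comparison


salt1, key1 = hash_password("password")
salt2, key2 = hash_password("password")
print(key1 != key2)                               # different salts give different stored hashes
print(verify_password("password", salt1, key1))
```

Because each user gets a different salt, a precomputed rainbow table for "password" does not match any stored hash.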


- Network Security:

- Firewalls: Network security devices that monitor and control incoming and outgoing network traffic based on predetermined security rules.

- Types: Packet filtering (stateless), stateful inspection (tracks connection state), proxy firewalls (application-layer gateway), next-generation firewalls (NGFW) with application awareness, intrusion prevention (IPS), and deep packet inspection (DPI). A stateful firewall would allow return traffic for an established outbound HTTP connection without needing an explicit inbound rule for that specific connection.
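The stateful behavior in the example above (return traffic allowed without an explicit inbound rule) comes from a connection-tracking table. This toy sketch assumes an outbound allow-list of destination ports and tracks flows by their 5-tuple only; real firewalls also track TCP state transitions, sequence numbers, and idle timeouts. The addresses are documentation and private-range placeholders.

```python
from typing import NamedTuple, Set


class Flow(NamedTuple):
    proto: str
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int

    def reply(self) -> "Flow":
        """The same connection seen in the opposite direction."""
        return Flow(self.proto, self.dst_ip, self.dst_port, self.src_ip, self.src_port)


class StatefulFirewall:
    """Toy connection tracker: outbound traffic to allowed ports creates state;
    inbound packets are accepted only if they reply to a tracked connection."""

    def __init__(self, allowed_outbound_ports: Set[int]):
        self.allowed_outbound_ports = allowed_outbound_ports
        self.connections: Set[Flow] = set()

    def outbound(self, flow: Flow) -> bool:
        if flow.dst_port in self.allowed_outbound_ports:
            self.connections.add(flow)  # remember this connection's state
            return True
        return False

    def inbound(self, flow: Flow) -> bool:
        # No explicit inbound rule: accept only replies to connections opened from inside.
        return flow.reply() in self.connections


fw = StatefulFirewall({80, 443})
request = Flow("tcp", "10.10.10.5", 51000, "203.0.113.7", 80)
print(fw.outbound(request))                                           # True: allowed port
print(fw.inbound(request.reply()))                                    # True: tracked reply
print(fw.inbound(Flow("tcp", "203.0.113.7", 80, "10.10.10.5", 51001)))  # False: unsolicited
```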

- IDS/IPS (Intrusion Detection/Prevention Systems): Monitor network or system activities for malicious activities or policy violations.

- Detection Methods: Signature-based (matches known attack patterns), anomaly-based (detects deviations from normal behavior), heuristic (rule-based analysis). A signature-based IDS might detect an attempted SQL injection by matching a known malicious SQL query pattern in network traffic.

- VPNs (Virtual Private Networks): Create encrypted tunnels over public networks to provide secure remote access or site-to-site connectivity.

- Protocols: IPsec (Internet Protocol Security - provides authentication, integrity, and confidentiality at the IP layer), SSL/TLS VPNs (often used for remote access, leveraging the TLS protocol). IPsec can operate in tunnel mode (encapsulating entire IP packets) or transport mode (encrypting only the payload).


3) Internal Mechanics / Architecture Details

This section examines the architectural components and operational mechanisms that IT managers must understand to effectively design, deploy, and manage IT infrastructure.

3.1) Infrastructure Layers and Interdependencies

IT infrastructure is typically conceptualized in a layered model, highlighting the dependencies between different components. Understanding these layers is crucial for troubleshooting, capacity planning, and impact analysis.

    +-------------------------------------------------------------------+
    | Layer 7: Application Layer                                        |
    | (e.g., ERP, CRM, Web Applications, SaaS platforms)                |
    | - Business logic, user interfaces, data processing                |
    +-------------------------------------------------------------------+
    | Layer 6: Presentation Layer (often merged with Application)       |
    | (e.g., APIs, UI frameworks, data serialization - JSON, XML)       |
    +-------------------------------------------------------------------+
    | Layer 5: Session Layer (often merged with Application/Middleware) |
    | (e.g., Connection management, state synchronization)              |
    +-------------------------------------------------------------------+
    | Layer 4: Transport Layer                                          |
    | (e.g., TCP, UDP; TLS/SSL runs on top of TCP)                      |
    | - End-to-end communication, reliability, flow control             |
    +-------------------------------------------------------------------+
    | Layer 3: Network Layer                                            |
    | (e.g., IP, Routing Protocols - OSPF, BGP; Routers)                |
    | - Addressing, routing, packet forwarding                          |
    +-------------------------------------------------------------------+
    | Layer 2: Data Link Layer                                          |
    | (e.g., Ethernet, Wi-Fi, MAC Addressing, VLANs; Switches)          |
    | - Frame formation, error detection, physical addressing           |
    +-------------------------------------------------------------------+
    | Layer 1: Physical Layer                                           |
    | (e.g., Cables, NICs, Hubs, Fiber Optics)                          |
    | - Transmission of raw bits over a medium                          |
    +-------------------------------------------------------------------+

    // --- Infrastructure Components ---

    +-----------------------+
    | Middleware Layer      | (e.g., Application Servers - Tomcat, JBoss; Message Queues - Kafka, RabbitMQ; Load Balancers - HAProxy, Nginx)
    +-----------------------+
    | Operating System Layer| (e.g., Linux - Ubuntu, CentOS; Windows Server; Hypervisor OS - ESXi)
    +-----------------------+
    | Virtualization Layer  | (e.g., VMware ESXi, KVM, Hyper-V, Xen)
    | - Host OS, Guest OS   |
    +-----------------------+
    | Storage Layer         | (DAS, NAS - NFS, SMB; SAN - iSCSI, Fibre Channel)
    | - Block, File, Object |
    +-----------------------+
    | Hardware Layer        | (Servers, Storage Arrays, Network Devices)
    +-----------------------+

- Virtualization: Hypervisors abstract hardware, allowing multiple operating systems (guest VMs) to run on a single physical machine (host).

- Type 1 (Bare-Metal): Runs directly on hardware (e.g., VMware ESXi, Microsoft Hyper-V, KVM). Offers better performance and scalability by minimizing overhead. A Type 1 hypervisor has direct access to hardware resources like CPU, memory, and I/O controllers.

- Type 2 (Hosted): Runs on top of a conventional OS (e.g., VMware Workstation, Oracle VirtualBox). Easier for development/testing but incurs more overhead. A Type 2 hypervisor relies on the host OS for hardware access.

- Key Concepts: VM sprawl (uncontrolled proliferation of VMs, leading to resource waste and management complexity), resource contention (CPU ready time, memory ballooning, I/O latency), VM migration (vMotion, live migration for high availability and load balancing), snapshots (point-in-time copies for rollback, but not a substitute for backups). High CPU ready time in a VM indicates that the VM is waiting for CPU resources to become available on the host.

- Storage Architectures:

- DAS (Direct-Attached Storage): Storage directly connected to a single server (e.g., internal hard drives, NVMe SSDs, or external drive enclosures attached through HBAs - Host Bus Adapters). Simple, high performance for that server, but not shared or easily scalable. A server's internal SSDs are DAS.

- NAS (Network-Attached Storage): File-level storage accessed over a standard network using protocols like NFS (Network File System) or SMB/CIFS (Server Message Block/Common Internet File System). Good for shared file access, typically uses Ethernet. A network share mounted via NFS on Linux clients is an example of NAS.

- SAN (Storage Area Network): Block-level storage accessed over a dedicated, high-speed network (typically Fibre Channel or iSCSI). Presents storage as raw blocks (LUNs) to servers, allowing them to format it with their own file systems. Offers high performance, scalability, and advanced features like LUN masking (controlling which servers can see which LUNs) and zoning (controlling which initiators and targets can communicate across the fabric). A LUN is typically presented to one server and formatted with a local file system such as NTFS or ext4; letting several servers mount the same LUN simultaneously requires a cluster-aware file system (e.g., VMFS, GFS2, OCFS2) to avoid data corruption.

- Converged and Hyperconverged Infrastructure (CI/HCI):

- CI: Integrates compute, storage, and networking into a pre-validated, pre-integrated hardware and software stack. Simplifies deployment and management but can have rigid scaling options (capacity is typically added in vendor-defined building blocks). A converged system might combine server blades, storage arrays, and network switches in a single rack or chassis.

- HCI: Extends CI by collapsing compute and storage into software-defined building blocks (nodes). Storage is distributed across nodes, often using software-defined storage (SDS) technologies (e.g., vSAN, Nutanix AOS). Offers greater flexibility and scalability (scale by adding nodes, each of which adds both compute and storage) but requires careful consideration of network design and node failure scenarios. In HCI, each node contributes its local storage to a shared pool, managed by software.

3.2) Service Management Frameworks (e.g., ITIL)

ITIL (Information Technology Infrastructure Library) provides a widely adopted framework for IT Service Management (ITSM), focusing on the delivery of IT services that meet business needs. Key processes include:

- Incident Management: The process of restoring normal service operation as quickly as possible and minimizing the adverse impact on business operations, ensuring that the best possible levels of service quality and availability are maintained.

- Technical Workflow:

- Detection: Monitoring alerts (e.g., Prometheus, Nagios), user reports, automated system checks.

- Logging: Record incident details (timestamp, affected service, user, symptoms, error codes, relevant logs).

- Categorization: Assign to a functional group (e.g., Network, Server, Application, Database).

- Prioritization: Based on impact (number of users/services affected) and urgency (time sensitivity). Common levels: P1 - Critical, P2 - High, P3 - Medium, P4 - Low.

- Diagnosis: Investigate symptoms, gather logs, analyze metrics, test hypotheses. This often involves cross-functional team collaboration.

- Resolution & Recovery: Apply workaround or permanent fix, test thoroughly, confirm service restoration with affected users/systems.

- Closure: Document resolution steps, root cause (if known), update knowledge base.

- Example: A critical web application is inaccessible (HTTP 503 Service Unavailable error).

- Detection: Synthetic monitoring alert fires.

- Diagnosis: Check web server logs (`/var/log/nginx/error.log`), application logs, database connection pool status, and resource utilization (CPU, RAM, disk I/O) on the application and database servers. Use tools like `tcpdump` to inspect traffic if network issues are suspected. An `strace` on the application server process might reveal that it is blocked waiting for a database connection.

- Resolution: If the database connection pool is exhausted, restart the database service or scale up database resources. If the application service is unresponsive, restart it. If a load balancer is misconfigured, correct its configuration.
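Prioritization is often implemented as an impact × urgency matrix. The sketch below assumes a three-level scale for both inputs and a hypothetical mapping onto the P1 to P4 levels listed above; real matrices are defined per organization (often with an additional P5 level), so the `_PRIORITY_BY_SCORE` table is an illustrative assumption rather than an ITIL-mandated rule.

```python
from enum import IntEnum


class Level(IntEnum):
    """Impact or urgency level; a lower value means more severe."""
    HIGH = 1
    MEDIUM = 2
    LOW = 3


# Hypothetical mapping from (impact + urgency) score to priority.
# Scores range from 2 (High/High) to 6 (Low/Low).
_PRIORITY_BY_SCORE = {
    2: "P1 - Critical",
    3: "P2 - High",
    4: "P3 - Medium",
    5: "P4 - Low",
    6: "P4 - Low",
}


def incident_priority(impact: Level, urgency: Level) -> str:
    """Derive an incident priority from impact and urgency."""
    return _PRIORITY_BY_SCORE[int(impact) + int(urgency)]
```

For the HTTP 503 outage in the example, an inaccessible critical application would plausibly be rated High impact and High urgency, so `incident_priority(Level.HIGH, Level.HIGH)` returns `"P1 - Critical"`.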


- Problem Management: The process responsible for managing the lifecycle of all problems. The primary objective of problem management is to find the root cause of incidents and then to initiate actions that will prevent recurrence of those incidents.

- Technical Workflow:

- Problem Identification: Often triggered by multiple related incidents, or proactive analysis of recurring events.

- Problem Logging: Detailed description of symptoms, affected services, and incident history.

- Investigation: Deep dive into logs, performance metrics, system configurations, code analysis, network traces.

- Root Cause Analysis (RCA): Techniques like "5 Whys" (iteratively asking "why" to uncover the underlying cause) or Fishbone diagrams (Ishikawa diagrams) to identify potential causes.

- Workaround Identification: A temporary solution to mitigate ongoing incidents while a permanent fix is developed.

- Known Error Database (KEDB): Documenting problems, their root causes, workarounds, and permanent solutions.

- Permanent Solution: Proposing a change that eliminates the root cause, typically by submitting a Request for Change (RFC).

- Example: Recurring performance degradation on an e-commerce site during peak sales. RCA reveals inefficient SQL queries that cause database locks, leading to application timeouts. A permanent solution involves query optimization, adding appropriate database indexes, and potentially schema adjustments.
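Problem identification from multiple related incidents can be partly automated by grouping incidents on a shared signature and flagging groups that recur. This Python sketch assumes a hypothetical `Incident` record and groups only on service plus error code with a fixed threshold; production ITSM tools typically also correlate by time window, configuration item, and log pattern.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Incident:
    """Hypothetical minimal incident record."""
    incident_id: str
    service: str
    error_code: str


def problem_candidates(
    incidents: list[Incident], threshold: int = 3
) -> list[tuple[str, str, int]]:
    """Return (service, error_code, count) groups seen at least `threshold` times.

    Groups are sorted by count, most frequent first; each one is a candidate
    for opening a problem record and starting root cause analysis.
    """
    counts = Counter((i.service, i.error_code) for i in incidents)
    recurring = [
        (service, code, n)
        for (service, code), n in counts.items()
        if n >= threshold
    ]
    return sorted(recurring, key=lambda group: group[2], reverse=True)
```

Three `DB_LOCK_TIMEOUT` incidents on a `checkout` service (hypothetical names echoing the e-commerce example) would be returned as one problem candidate, while a single unrelated 503 on another service would not.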


- Change Management: A controlled process for managing all changes to IT infrastructure and services. Aims to minimize disruption and risk by ensuring changes are properly planned, tested, approved, and implemented.

- Change Record Example (RFC - Request for Change):

```json
{
  "change_id": "CHG20231027-001",
  "title": "Upgrade Kubernetes Cluster to v1.28",
  "requestor": "DevOps Team",
  "description": "Upgrade all worker nodes and control plane components to Kubernetes v1.28 for security patches and new features.",
  "type": "Normal",
  "priority": "High",
  "impact": "High (All services running on the cluster)",
  "risk": "Medium (Potential for downtime if rolling update fails)",
  "scheduled_start_time": "2023-10-28T01:00:00Z",
  "scheduled_end_time": "2023-10-28T04:00:00Z",
  "backout_plan": "Rollback to previous Kubernetes version by reverting cluster configuration and redeploying node images.",
  "approval_status": "Pending Review",
  "affected_configuration_items": ["CI-K8S-CONTROLPLANE-PROD", "CI-K8S-NODE-POOL-A"]
}
```

The `type` field takes one of `Normal`, `Standard`, or `Emergency`. A Standard change is pre-authorized, low risk, and follows an established procedure, so a high-impact cluster upgrade that still awaits approval is classified as Normal.
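Policy checks on change records can be enforced automatically before a record reaches review. This is a minimal Python sketch, assuming the field names of the example record above and a small set of hypothetical rules: required fields, an allowed `type`, an end time after the start time, and the ITIL convention that Standard changes are pre-authorized and low risk. It is not the API of any particular ITSM tool.

```python
from datetime import datetime

ALLOWED_TYPES = {"Normal", "Standard", "Emergency"}
REQUIRED_FIELDS = {
    "change_id", "title", "type", "risk",
    "scheduled_start_time", "scheduled_end_time", "backout_plan",
}


def _parse_utc(ts: str) -> datetime:
    # datetime.fromisoformat() before Python 3.11 rejects a trailing "Z".
    return datetime.fromisoformat(ts.replace("Z", "+00:00"))


def validate_rfc(rfc: dict) -> list[str]:
    """Return policy violations for a change record; an empty list means it passes.

    The rules are illustrative assumptions, not any ITSM tool's built-in checks.
    """
    errors = [f"missing field: {name}" for name in sorted(REQUIRED_FIELDS - set(rfc))]
    if errors:
        return errors
    if rfc["type"] not in ALLOWED_TYPES:
        errors.append(f"unknown change type: {rfc['type']}")
    if _parse_utc(rfc["scheduled_end_time"]) <= _parse_utc(rfc["scheduled_start_time"]):
        errors.append("scheduled_end_time must be after scheduled_start_time")
    # Standard changes are pre-authorized and low risk; assumes "risk" starts
    # with a level word such as "Low", "Medium", or "High".
    if rfc["type"] == "Standard" and not rfc["risk"].startswith("Low"):
        errors.append("Standard changes must be low risk; classify as Normal")
    if not rfc["backout_plan"].strip():
        errors.append("backout_plan must not be empty")
    return errors
```

Run against a record whose `risk` is `"Medium (...)"`, the validator accepts it as a Normal change but reports a violation if the same record is submitted as Standard.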


- Configuration Management: The process of identifying, controlling, maintaining, and verifying the versions of all configuration items (CIs) within an IT environment. The Configuration Management Database (CMDB) is the central repository.

- Configuration Item (CI): Any component that needs to be managed to deliver an IT service (e.g., servers, applications, databases, network devices, documentation, licenses).

- CMDB Entry Example (JSON):

```json
{
  "ci_id": "APP-CRM-PROD-001",
  "ci_type": "ApplicationInstance",
  "name": "Salesforce CRM",
  "version": "Winter '24",
  "deployed_on": "2023-01-15",
  "environment": "Production",
  "dependencies": [
    {"ci_ref": "IDP-SSO-PROD", "relationship": "Authenticates via"},
    {"ci_ref": "DB-POSTGRES-PROD", "relationship": "Stores data in"}
  ],
  "owner_team": "Sales Operations",
  "status": "Operational",
  "last_updated": "2023-10-27T10:00:00Z"
}
```
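One practical use of the `dependencies` relationships in a CMDB is impact analysis: finding every CI affected when one component fails. The Python sketch below assumes entries shaped like the CMDB example above, keyed by `ci_id`, and walks the dependency graph breadth-first in reverse. The function name and the second application CI mentioned in the usage note are hypothetical.

```python
from collections import deque


def impacted_cis(cmdb: dict[str, dict], failed_ci: str) -> set[str]:
    """Return every CI that directly or transitively depends on `failed_ci`.

    Assumes `cmdb` maps ci_id -> entry, where entry["dependencies"] is a list of
    {"ci_ref": ..., "relationship": ...} objects as in the CMDB example.
    """
    # Invert the edges: dependency ci_ref -> set of CIs that rely on it.
    dependents: dict[str, set[str]] = {}
    for ci_id, entry in cmdb.items():
        for dep in entry.get("dependencies", []):
            dependents.setdefault(dep["ci_ref"], set()).add(ci_id)

    # Breadth-first walk from the failed CI to everything that depends on it.
    impacted: set[str] = set()
    queue = deque([failed_ci])
    while queue:
        current = queue.popleft()
        for dependent in dependents.get(current, set()):
            # Skip the failed CI itself (possible if the graph has a cycle).
            if dependent != failed_ci and dependent not in impacted:
                impacted.add(dependent)
                queue.append(dependent)
    return impacted
```

If `DB-POSTGRES-PROD` fails, `APP-CRM-PROD-001` is returned, together with any CI, such as a hypothetical `APP-REPORTING-PROD`, that depends on the CRM instance.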

3.3) Automation and Orchestration

Automating repetitive tasks and orchestrating complex workflows are essential for efficient, consistent, scalable, and reliable IT operations.

- Configuration Management Tools: Ensure systems are configured consistently and automatically.

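- Ansible: Agentless; connects to managed hosts over SSH. Playbooks are written in YAML. Most modules are idempotent, so re-running a playbook makes no further changes once the desired state is reached; the `command` and `shell` modules are exceptions unless guarded with arguments such as `creates` or `removes`.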

- Ansible Playbook Example (

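The idempotency idea can be illustrated independently of Ansible: a task declares a desired state and changes the system only when that state is not yet met. The Python sketch below is a conceptual analogue of `ansible.builtin.lineinfile` with `state: present`, operating on an in-memory list of lines rather than a file; it is not Ansible's implementation, and the function names and the sshd setting in the usage note are illustrative.

```python
def ensure_line(lines: list[str], line: str) -> tuple[list[str], bool]:
    """Idempotent: return (new_lines, changed), appending `line` only if absent.

    `changed` mirrors the "changed" vs "ok" status Ansible reports per task.
    """
    if line in lines:
        return lines, False  # desired state already met -> "ok"
    return lines + [line], True  # state had to be changed -> "changed"


def naive_append(lines: list[str], line: str) -> list[str]:
    """Non-idempotent counterpart, like running `echo line >> file` every time."""
    return lines + [line]
```

Calling `ensure_line` twice with `"PermitRootLogin no"` leaves a single copy and reports a change only on the first call, whereas `naive_append` adds a duplicate on every run, which is why unguarded shell-style tasks are not idempotent.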

Source

- Wikipedia page: https://en.wikipedia.org/wiki/Information_technology_management

- Wikipedia API endpoint: https://en.wikipedia.org/w/api.php

- AI enriched at: 2026-03-30T20:23:53.423Z