LMDeploy Vulnerability Exploited Rapidly After Disclosure

A critical Server-Side Request Forgery (SSRF) vulnerability in LMDeploy, a toolkit for deploying and serving large language models, was exploited in the wild within hours of its public disclosure. The speed of weaponization reflects a growing trend: security flaws in AI infrastructure are being attacked almost as soon as they are announced.

Published: 2026-04-24 | Author: Patrick Mattos

Attackers began targeting a significant security flaw in LMDeploy, an open-source framework for optimizing, deploying, and serving large language models (LLMs), shortly after it was publicly announced. The vulnerability, tracked as CVE-2026-33626, carries a CVSS score of 7.5 and lets an attacker reach sensitive information through a Server-Side Request Forgery (SSRF) attack. The speed of exploitation shows how little time operators of AI-related infrastructure have between disclosure and attack.

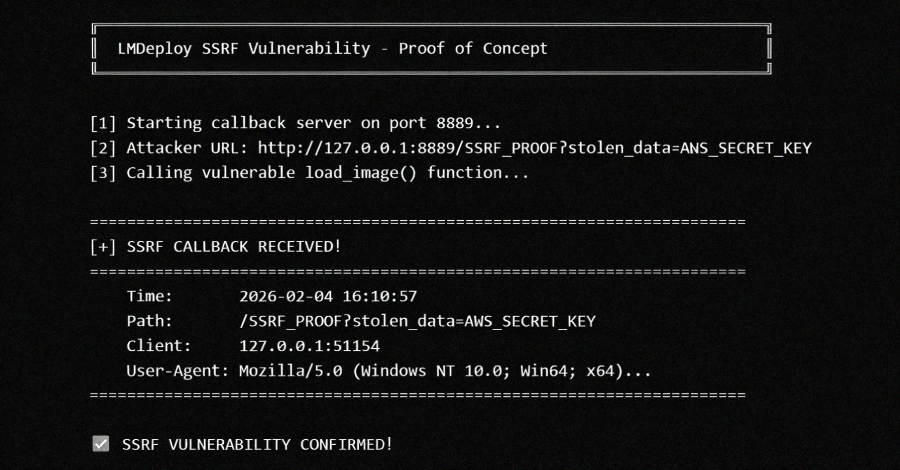

The exploit chain begins with the load_image() function in LMDeploy's vision-language module. According to an advisory from the project maintainers, this function accepts arbitrary URLs without checking whether they resolve to internal or private IP addresses. That gap lets malicious actors reach cloud metadata services, access internal network resources, and potentially exfiltrate sensitive data. All versions of LMDeploy up to and including 0.12.0 that include vision-language support are believed to be affected.

Technical Context

CVE-2026-33626 is a classic Server-Side Request Forgery (SSRF) issue. Attackers can use the load_image() function in lmdeploy/vl/utils.py to make the server send requests on their behalf to internal or external resources that the attacker could not reach directly. Targets can include cloud provider metadata endpoints (such as AWS IMDS), internal databases, and other sensitive services exposed only within the private network.
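The kind of check the advisory describes as missing can be sketched as follows. This is an illustrative sketch only, not LMDeploy's code or its actual fix: the names `is_safe_image_url` and `is_public_address` are hypothetical, and the policy (allow only http/https URLs whose every resolved address is globally routable) is an assumption about what a reasonable validation might look like.

```python
import ipaddress
import socket
from urllib.parse import urlsplit

ALLOWED_SCHEMES = {"http", "https"}


def is_public_address(addr: str) -> bool:
    """Return True only for globally routable IP addresses."""
    try:
        ip = ipaddress.ip_address(addr)
    except ValueError:
        return False  # unparsable address: treat as unsafe
    # Unwrap IPv4-mapped IPv6 (e.g. ::ffff:169.254.169.254) before checking.
    if ip.version == 6 and ip.ipv4_mapped is not None:
        ip = ip.ipv4_mapped
    return ip.is_global


def is_safe_image_url(url: str, resolver=socket.getaddrinfo) -> bool:
    """Hypothetical pre-fetch check: reject URLs that point at non-public hosts."""
    try:
        parts = urlsplit(url)
        host, port = parts.hostname, parts.port
    except ValueError:
        return False  # malformed URL or port
    if parts.scheme not in ALLOWED_SCHEMES or not host:
        return False
    try:
        infos = resolver(host, port or 80, type=socket.SOCK_STREAM)
    except (OSError, UnicodeError):
        return False  # resolution failed: do not fetch
    addresses = {info[4][0] for info in infos}
    # Every address the name resolves to must be public.
    return bool(addresses) and all(is_public_address(a) for a in addresses)
```

A check like this is only a first layer. It validates the address at lookup time, so a real fetcher would also need to connect to the address it validated and re-check every redirect target; otherwise DNS rebinding or an HTTP redirect could still steer the request to an internal host.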

Security researchers at Sysdig observed exploitation attempts 12 hours and 31 minutes after the vulnerability's disclosure. The activity originated from IP address 103.116.72[.]119 and unfolded over an eight-minute period. The attacker used the vision-language module as a generic SSRF primitive to probe the internal network: attempting to access the AWS Instance Metadata Service (IMDS), scanning for services such as Redis and MySQL, interacting with a secondary HTTP administrative interface, and establishing an out-of-band (OOB) DNS exfiltration channel. The attacker also switched between different vision-language models, such as internlm-xcomposer2 and OpenGVLab/InternVL2-8B, potentially to evade detection.
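To make the probe categories above concrete, the sketch below triages image URLs pulled from request logs. It is a hypothetical helper, not Sysdig's detection logic: the function name `classify_target` and the category labels are invented for illustration, and it assumes the well-known IMDS address 169.254.169.254 and the default Redis (6379) and MySQL (3306) ports.

```python
import ipaddress
from urllib.parse import urlsplit

IMDS_ADDRESS = ipaddress.ip_address("169.254.169.254")
# Default ports only; services on non-default ports fall through to "internal".
DATASTORE_PORTS = {6379: "redis", 3306: "mysql"}


def classify_target(url: str) -> str:
    """Label the destination of an image URL for triage (hypothetical categories)."""
    try:
        parts = urlsplit(url)
        host, port = parts.hostname, parts.port
    except ValueError:
        return "malformed"
    if not host:
        return "malformed"
    try:
        ip = ipaddress.ip_address(host)
    except ValueError:
        return "hostname"  # not an IP literal; needs DNS resolution to judge
    if ip == IMDS_ADDRESS:
        return "cloud-metadata"
    if port in DATASTORE_PORTS and not ip.is_global:
        return f"internal-{DATASTORE_PORTS[port]}"
    if ip.is_loopback:
        return "loopback"
    if not ip.is_global:
        return "internal"
    return "external"
```

URLs labeled `hostname` are not automatically benign: an out-of-band DNS channel of the kind described above relies on attacker-controlled hostnames, so those entries would need separate review.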

This pattern of rapid exploitation, even without a readily available proof-of-concept (PoC) exploit at the time of the attack, is becoming increasingly common in the AI infrastructure space. Advisories that provide detailed information, including affected files, parameters, root cause analysis, and sample vulnerable code, can serve as direct prompts for LLMs to generate exploit code.

Impact and Risk

The successful exploitation of CVE-2026-33626 poses a significant risk to organizations deploying LLMs. The potential impacts include:

- Credential Theft: Attackers can steal cloud credentials by accessing metadata services, leading to unauthorized access to cloud resources.

- Internal Network Reconnaissance: The vulnerability allows attackers to perform port scanning and discover internal services not exposed to the public internet.

- Lateral Movement: Gaining access to internal systems can facilitate further compromise and lateral movement within the network.

- Data Exfiltration: Sensitive data residing on internal systems or accessible via metadata services could be stolen.

The rapid exploitation suggests that threat actors are actively monitoring disclosures related to AI infrastructure and are quick to weaponize newly found vulnerabilities. The severity is rated as high (CVSS 7.5), indicating a substantial risk to affected systems.

Defensive Takeaways

Organizations utilizing LMDeploy or similar LLM deployment frameworks should prioritize the following defensive measures:

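- Patch Management: Immediately update LMDeploy to a release newer than 0.12.0 that addresses CVE-2026-33626. If immediate patching is not feasible, implement compensating controls, such as blocking outbound requests from inference servers to metadata endpoints and internal address ranges.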

- Network Segmentation: Enforce strict network segmentation to limit the blast radius of any potential compromise. Internal services should not be accessible from untrusted network segments.

- Access Control: Implement robust access controls and authentication for all internal services, including metadata services.

- Intrusion Detection and Prevention: Deploy and configure intrusion detection and prevention systems (IDPS) to monitor for suspicious network traffic patterns, including SSRF attempts and internal port scanning.

- Vulnerability Scanning: Regularly scan your infrastructure for known vulnerabilities and ensure timely remediation.

- Security Monitoring: Enhance security monitoring for LLM inference servers and related infrastructure, paying close attention to unusual network requests or data exfiltration attempts.
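As one illustration of the monitoring measures above, the following sketch flags an inference server whose outbound fetches fan out to many distinct host and port pairs in a short window, which is the pattern internal port scanning produces. The class name `FanOutDetector`, the event shape, and the threshold are all hypothetical; the eight-minute default window simply mirrors the session length reported in the observed attack.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class FanOutDetector:
    window_seconds: float = 480.0  # eight minutes, mirroring the observed session
    max_distinct_targets: int = 5  # hypothetical threshold; tune per environment
    _events: deque = field(default_factory=deque)

    def observe(self, timestamp: float, host: str, port: int) -> bool:
        """Record one outbound fetch; return True if the window looks like a scan."""
        self._events.append((timestamp, (host, port)))
        # Drop events that have aged out of the sliding window.
        while self._events and timestamp - self._events[0][0] > self.window_seconds:
            self._events.popleft()
        distinct = {target for _, target in self._events}
        return len(distinct) > self.max_distinct_targets
```

In practice the events would come from egress proxy or flow logs for the inference hosts, and timestamps are assumed to arrive in non-decreasing order. A legitimate image-serving workload normally fetches from a small, stable set of destinations, so a sudden spread across internal ports stands out.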